NVIDIA Cosmos 2026: Open AI That Teaches Robots Physics

Authors: Casual Craft Science

Imagine a robot looking at a falling glass. Instead of just registering shifting pixels on a camera feed, it understands that the glass will shatter upon impact and intuitively calculates the trajectory of the shards. This level of physical intuition is the promise of NVIDIA Cosmos, unveiled by Jensen Huang at CES 2025 and significantly expanded at CES 2026. The announcement is already being heralded as the «ChatGPT moment for robotics.»

The Long Road to Physical Intelligence

Historically, artificial intelligence has evolved within a virtual vacuum. Large Language Models learned to juggle words masterfully, and image generators mastered pixels. However, when algorithms attempted to interact with the messy, real, physical world, they stumbled over fundamental concepts: gravity, friction, and inertia.

For a long time, training robots required an immense number of real-world trials, which often broke expensive equipment. Earlier attempts to move training into simulators (sim-to-real transfer) ran into the reality gap: algorithms that performed flawlessly in sterile simulations failed outright when faced with a slight change in lighting or a lens flare in the real world.

World Foundation Model (WFM): a neural network trained on vast amounts of physical-world data (video, 3D scenes, trajectories). It «understands» the laws of physics and can predict how a scene will change over the next few seconds, much as a language model predicts the next word.
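To make the analogy with next-word prediction concrete, here is a minimal sketch of an autoregressive rollout. It is a toy under stated assumptions: the hypothetical `predict_next` function hard-codes free-fall physics for a single object instead of learning to predict video frames, and none of the names come from Cosmos. What it does show is the loop a world model shares with a language model: predict one step, feed the prediction back in, repeat.

```python
from dataclasses import dataclass

GRAVITY = -9.81  # m/s^2, pointing down
DT = 0.05        # seconds between predicted "frames"


@dataclass(frozen=True)
class State:
    """Toy scene state: height (m) and vertical velocity (m/s) of one object."""
    height: float
    velocity: float


def predict_next(state: State) -> State:
    """Hypothetical stand-in for a learned world model.

    A real WFM predicts future video frames from past ones; this toy version
    hard-codes free fall (semi-implicit Euler) so the example is self-contained.
    """
    velocity = state.velocity + GRAVITY * DT
    height = state.height + velocity * DT
    if height <= 0.0:
        return State(0.0, 0.0)  # hit the floor: the object stops
    return State(height, velocity)


def rollout(initial: State, steps: int) -> list[State]:
    """Autoregressive rollout: each prediction is fed back in as the next input,
    the same loop a language model runs when it generates text token by token."""
    trajectory = [initial]
    for _ in range(steps):
        trajectory.append(predict_next(trajectory[-1]))
    return trajectory


if __name__ == "__main__":
    # A glass released from rest 1 m above the floor.
    for i, s in enumerate(rollout(State(height=1.0, velocity=0.0), steps=12)):
        print(f"t={i * DT:.2f} s  height={s.height:.3f} m")
```

A learned model would replace `predict_next` while keeping the rollout loop unchanged, which is why errors in a single prediction can compound over a long horizon.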

Inside the NVIDIA Cosmos Paradigm

NVIDIA Cosmos is not a single neural network but a broad platform for simulating the physical world. At its core are World Foundation Models (WFMs), released as open models that developers can fine-tune.

The announcement centers on three components:

Cosmos Predict: a generative world model that creates high-fidelity video (up to 30 seconds) from text, image, or video inputs. Cosmos Predict 2.5 unifies Text2World, Image2World, and Video2World generation in a single model trained on 200 million video clips. It allows robots to «foresee» the consequences of their actions before executing them.

Cosmos Transfer: a «style transfer» neural network that takes structured inputs from simulations (segmentation maps, depth maps, LiDAR scans, pose estimates) and generates controllable photorealistic video, bridging the reality gap between virtual and real environments.

Isaac GR00T: NVIDIA's robot-learning platform, which generates synthetic motion data through the GR00T-Dreams and GR00T-Mimic blueprints. It learns from human demonstrations and uses Cosmos to scale them into motion trajectories for different robot embodiments.
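Letting a robot «foresee» the consequences of its actions corresponds to a well-known pattern, model-predictive control with a learned world model: imagine several candidate action sequences, score the predicted outcomes, and execute only the first action of the best one. The sketch below uses random shooting, one of the simplest such planners. Everything in it is hypothetical: `world_model` is a hand-written 1-D cart model standing in for a learned predictor, and Cosmos Predict itself generates video rather than the compact (position, velocity) state used here.

```python
import random


def world_model(position: float, velocity: float, force: float, dt: float = 0.1):
    """Hypothetical stand-in for an action-conditioned world model: predicts the
    next (position, velocity) of a 1 kg cart with light friction."""
    velocity = velocity + (force - 0.1 * velocity) * dt
    position = position + velocity * dt
    return position, velocity


def imagined_cost(position, velocity, actions, target):
    """'Imagine' the outcome of an action sequence by rolling the model forward,
    then score it: distance to target plus a small penalty for leftover speed."""
    for force in actions:
        position, velocity = world_model(position, velocity, force)
    return abs(target - position) + 0.1 * abs(velocity)


def plan(position, velocity, target, horizon=10, candidates=200, seed=0):
    """Random-shooting planner: sample action sequences, keep the one with the
    best imagined outcome, and return only its first action."""
    rng = random.Random(seed)
    best_actions, best_cost = None, float("inf")
    for _ in range(candidates):
        actions = [rng.uniform(-1.0, 1.0) for _ in range(horizon)]
        cost = imagined_cost(position, velocity, actions, target)
        if cost < best_cost:
            best_actions, best_cost = actions, cost
    return best_actions[0]


if __name__ == "__main__":
    pos, vel = 0.0, 0.0
    for step in range(30):
        force = plan(pos, vel, target=1.0, seed=step)  # think before acting
        pos, vel = world_model(pos, vel, force)        # then act (here: simulated)
    print(f"final position: {pos:.2f} m (target 1.00 m)")
```

Re-planning at every step (a receding horizon) lets the robot correct course when reality drifts from what the model imagined, at the cost of running many rollouts per action.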

Official NVIDIA Cosmos video: a platform for physical AI. Source: NVIDIA

The Digital Forge of the Future

How does this work in practice? A robot developer no longer needs to spend months testing prototypes on a physical track.

Instead, a digital twin of the environment is built with the Cosmos toolset, which sits on top of NVIDIA Omniverse, and the required tasks are simulated there. Cosmos Transfer then «paints» the simulation with thousands of visual variations that mimic a wide range of real-world conditions (rain, harsh backlighting, dirt on camera lenses). Using this photorealistic data, the robot learns to control its motors via Cosmos Policy, while Cosmos Predict helps it plan sequences of actions by anticipating how objects will react.
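The «painting» step resembles classic domain randomization: render one clean simulated frame, then produce many visually perturbed copies that all keep the simulator's ground-truth label. The sketch below implements that simpler technique on a toy grayscale image with hand-written perturbations. It is not how Cosmos Transfer works internally (Transfer is a generative model conditioned on inputs such as depth and segmentation maps), and all function names are hypothetical.

```python
import random

Image = list[list[float]]  # grayscale pixels in [0, 1]


def _clamp(x: float) -> float:
    return min(1.0, max(0.0, x))


def harsh_light(img: Image, rng: random.Random) -> Image:
    """Random gain and offset: a crude proxy for backlighting or glare."""
    gain, bias = rng.uniform(0.6, 1.6), rng.uniform(-0.1, 0.3)
    return [[_clamp(p * gain + bias) for p in row] for row in img]


def rain(img: Image, rng: random.Random) -> Image:
    """Scatter bright pixels: a crude proxy for raindrops."""
    return [[1.0 if rng.random() < 0.02 else p for p in row] for row in img]


def lens_dirt(img: Image, rng: random.Random) -> Image:
    """Darken a random disc: a crude proxy for dirt on the camera lens."""
    h, w = len(img), len(img[0])
    cy, cx = rng.randrange(h), rng.randrange(w)
    r = rng.uniform(1.0, max(h, w) / 4)
    return [[p * 0.3 if (y - cy) ** 2 + (x - cx) ** 2 <= r * r else p
             for x, p in enumerate(row)]
            for y, row in enumerate(img)]


def randomize(frame: Image, label, n: int, seed: int = 0):
    """Turn one clean simulated frame into n perturbed training samples.

    The simulator's label (e.g. a grasp point) is copied unchanged: only the
    appearance changes, not the scene geometry the label describes.
    """
    rng = random.Random(seed)
    samples = []
    for _ in range(n):
        img = frame
        for perturb in (harsh_light, rain, lens_dirt):
            if rng.random() < 0.5:  # apply each perturbation half the time
                img = perturb(img, rng)
        samples.append((img, label))
    return samples


if __name__ == "__main__":
    sim_frame = [[(x + y) / 14 for x in range(8)] for y in range(8)]
    batch = randomize(sim_frame, label=(3, 4), n=1000)
    print(len(batch), "training samples from one simulated frame")
```

The key design point carries over to generative approaches: labels come for free from the simulator, so every visual variant is a correctly annotated training example.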

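NVIDIA also offers Cosmos Reason, a reasoning vision-language model for physical-world understanding and planning, along with Cosmos Curator (for filtering and annotating large video datasets) and Cosmos Tokenizer (for compressing video into tokens the models can process).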

Why This Changes Everything

Access to open «world perception» models democratizes robotics. Previously, building advanced AI for autonomous systems required the data and compute of companies on the scale of Tesla or Boston Dynamics. Now NVIDIA is offering an off-the-shelf «physics engine»: a foundational brain that only needs fine-tuning for a specific robot dog, forklift, or humanoid assistant.

This could significantly accelerate the deployment of machines in factories, warehouses, and eventually our homes. In spring 2025, the Isaac GR00T package already enabled the creation of synthetic motion datasets; Cosmos supplements this with a full-fledged digital world for training.

A Critical Analysis

Cosmos is a corporate platform rather than a peer-reviewed scientific study, so most of its performance claims come from NVIDIA itself.

Strengths:

- An elegant solution to the «reality gap» through synthetic data stylization (Cosmos Transfer).

- Open models (WFMs) supporting fine-tuning — lowering the barrier to entry for startups.

- A layer built atop the already popular Omniverse/Isaac ecosystem with an active community.

Limitations:

- The technology still relies heavily on video generation. Predicting physics by generating frames can produce hidden «hallucinations» (e.g., misjudging the mass of an object).

- No public data yet on edge (onboard) compute requirements; models this large are likely to demand powerful chips on the robots themselves.

- Lock-in to NVIDIA’s proprietary ecosystem: dependency on its GPUs and SDKs.
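One way to surface the «hallucinations» mentioned in the first limitation is to check generated rollouts against known physics. The sketch below assumes a hypothetical setup in which an object's height has already been extracted from each predicted frame (for example by an object tracker). It estimates acceleration with second-order finite differences and flags frames where a freely falling object does not accelerate at roughly g. It only catches kinematic glitches; errors such as a misjudged mass would need richer checks.

```python
G = 9.81  # m/s^2


def accelerations(heights: list[float], dt: float) -> list[float]:
    """Central second differences: a[i] ~ (h[i+1] - 2*h[i] + h[i-1]) / dt**2,
    estimated for every interior frame i."""
    return [(heights[i + 1] - 2 * heights[i] + heights[i - 1]) / dt ** 2
            for i in range(1, len(heights) - 1)]


def implausible_frames(heights: list[float], dt: float,
                       tolerance: float = 1.0) -> list[int]:
    """Indices of frames where a supposedly free-falling object does not
    accelerate downward at about g (within `tolerance` m/s^2)."""
    return [i for i, a in enumerate(accelerations(heights, dt), start=1)
            if abs(a + G) > tolerance]


if __name__ == "__main__":
    dt = 0.05
    # Hypothetical per-frame heights extracted from a generated video:
    # an object dropped from 2 m, following h(t) = 2 - g*t^2/2.
    predicted = [2.0 - 0.5 * G * (k * dt) ** 2 for k in range(10)]
    print(implausible_frames(predicted, dt))  # → []
    predicted[5] += 0.05                      # the object briefly "floats"
    print(implausible_frames(predicted, dt))  # → [4, 5, 6]
```

A single glitched frame shows up in three consecutive flags because each central difference looks at a frame and both of its neighbors.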

Open Questions: How well will Cosmos models handle extreme corner cases that were absent from the training dataset — particularly in chaotic urban environments?

What’s Next

The immediate next phase involves the practical adoption of these models by startups and research labs. Over the next year, we are likely to witness an avalanche of new robots from companies utilizing Cosmos as the bedrock for their machines’ «physical consciousness.»

References

Context

Related Articles

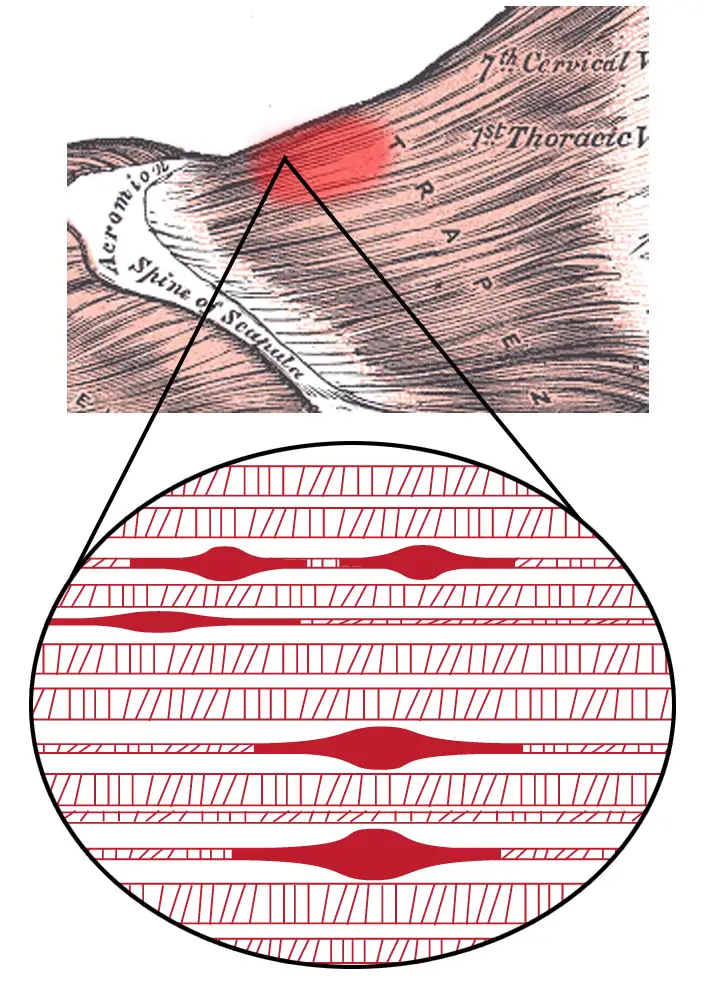

Muscle Knots: 2024 Trigger Point Breakthrough

Why muscle knots form, how they harm your health, and how to treat them — featuring the molecular pathway discovered in 2024.

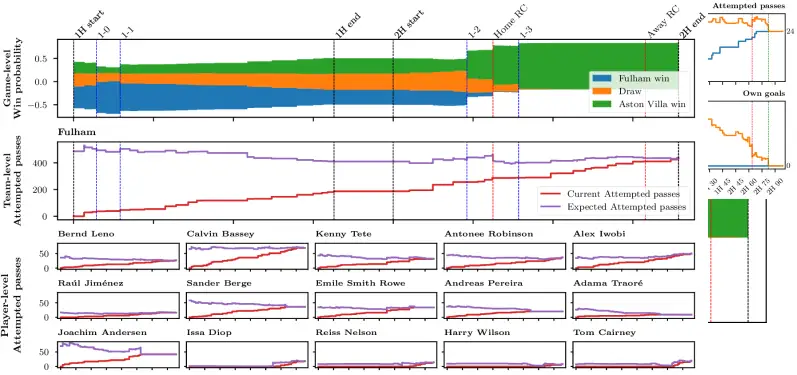

75,000 Predictions Per Match: AI in Football

Stats Perform researchers built an Axial Transformer neural network that generates 75,000 live predictions per football match with sub-second latency

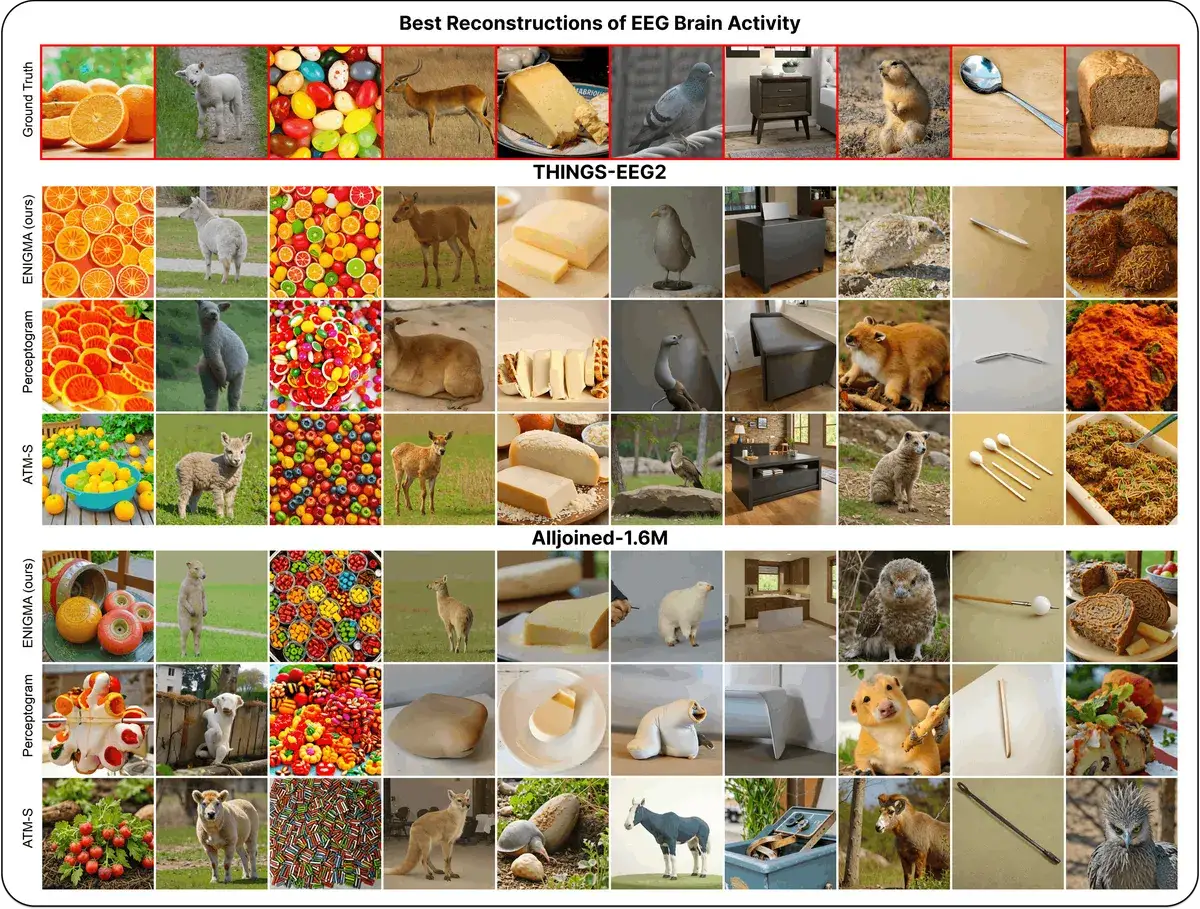

ENIGMA: Reading Minds in 15 Min via EEG

ENIGMA reconstructs images from EEG signals after 15 min of calibration, using under 1% of previous methods' parameters.