Neuralink Gave an ALS Patient His Voice Back

Authors: Neuralink

Kenneth Shock used to coach his kids through Mario Kart races. Then ALS took his voice. By the time he received a Neuralink brain implant in January 2026, he had been nonverbal for years. A few weeks later, he was talking again — not typing on a screen, not blinking at a letter board, but speaking out loud in his own voice, reconstructed by AI from recordings made before his disease progressed.

When the system said «I love you» for the first time using his old voice, his wife Sheryl cried.

This is not science fiction anymore, though it isn’t quite routine medicine yet either. Here is how it works.

A Chip That Reads Intentions

A brain–computer interface (BCI) is a device that records electrical signals from the brain and translates them into commands: moving a cursor, typing text, or, in this case, producing speech. The brain generates measurable patterns even when the body can no longer act on them.

The Neuralink N1 implant is a coin-sized device that sits in an opening in the skull. From it, 64 ultra-thin, flexible threads, each thinner than a human hair and together carrying 1,024 electrodes, reach into the motor cortex: the brain region responsible for planning movement, including the movements of the tongue, lips, and vocal cords that produce speech. A surgical robot called R1 inserts the threads with micron-level precision to avoid blood vessels.

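Those electrodes pick up electrical signals in the microvolt range: tiny voltage fluctuations produced by neurons firing. The chip amplifies and digitizes them, which at full resolution amounts to roughly 200 megabits of data per second. That is far more than the implant's low-power wireless link can carry, so the chip processes the signal on board and transmits a much smaller stream. No wires exit the skull.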
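A figure like 200 megabits per second falls out of simple arithmetic. The sketch below assumes 20 kHz sampling and 10-bit samples on each of the 1,024 channels (figures Neuralink has cited publicly for the N1; treat them as assumptions, not trial data). The same arithmetic shows how much on-chip reduction a wireless link of a given capacity would require; the 1 Mbit/s link budget is a hypothetical example value.

```python
# Back-of-the-envelope raw data rate for a 1,024-channel implant.
# ASSUMPTIONS: 20 kHz sampling, 10 bits per sample, and a hypothetical
# 1 Mbit/s wireless budget used only to illustrate the reduction ratio.
CHANNELS = 1024
SAMPLE_RATE_HZ = 20_000
BITS_PER_SAMPLE = 10
LINK_BITS_PER_S = 1_000_000  # hypothetical link capacity


def raw_data_rate_bps(channels: int, sample_rate_hz: int, bits_per_sample: int) -> int:
    """Bits per second produced before any on-chip processing."""
    return channels * sample_rate_hz * bits_per_sample


raw = raw_data_rate_bps(CHANNELS, SAMPLE_RATE_HZ, BITS_PER_SAMPLE)
ratio = raw / LINK_BITS_PER_S  # how many times smaller the sent stream must be

print(f"raw stream: {raw / 1e6:.1f} Mbit/s")  # raw stream: 204.8 Mbit/s
print(f"required reduction: ~{ratio:.0f}x")   # required reduction: ~205x
```

Changing any assumed parameter (say, 8-bit samples) rescales the result linearly, which is why sampling choices matter so much for wireless implants.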

From Neural Sparks to Spoken Words

When you think about saying a word, neurons in your motor cortex fire in a specific pattern, even if your muscles can no longer carry out the movement. The pattern for «hello» differs from the pattern for «water.» This matters for people with ALS: the disease destroys the motor neurons that relay commands from brain to muscles, but the cortex can often still produce these patterns.

A phoneme is the smallest unit of sound that distinguishes one word from another. English has about 44 phonemes; the word «cat» has three: /k/, /æ/, /t/. BCI speech systems decode neural activity into phonemes first, then assemble them into words.
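To make the "phonemes first, then words" step concrete, here is a minimal sketch that splits a decoded phoneme sequence into words using a pronunciation lexicon. The lexicon, its ARPAbet-style symbols (/æ/ is written `AE`), and the function names are hypothetical; Neuralink has not published how its system assembles words, and real decoders typically score competing splits with a language model rather than taking the first one found.

```python
from __future__ import annotations

from functools import lru_cache

# Hypothetical toy lexicon: word -> phoneme sequence (ARPAbet-style symbols).
LEXICON = {
    "i": ("AY",),
    "love": ("L", "AH", "V"),
    "you": ("Y", "UW"),
    "cat": ("K", "AE", "T"),
}


def segment(phonemes: tuple[str, ...]) -> list[str] | None:
    """Split a phoneme sequence into lexicon words; None if no split exists."""
    by_pron = {pron: word for word, pron in LEXICON.items()}
    max_len = max(len(p) for p in by_pron)

    @lru_cache(maxsize=None)
    def first_from(start: int) -> tuple[str, ...] | None:
        # Depth-first search with memoization: try shorter words first and
        # return the first segmentation that covers the rest of the sequence.
        if start == len(phonemes):
            return ()
        for end in range(start + 1, min(start + max_len, len(phonemes)) + 1):
            word = by_pron.get(phonemes[start:end])
            if word is not None:
                rest = first_from(end)
                if rest is not None:
                    return (word,) + rest
        return None

    result = first_from(0)
    return list(result) if result is not None else None


print(segment(("AY", "L", "AH", "V", "Y", "UW")))  # ['i', 'love', 'you']
```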

Neuralink’s speech decoding works in stages. First, the patient practices speaking aloud while the system learns which neural patterns correspond to which sounds. Then the patient mouths words silently. Finally, the patient only thinks about speaking — and the system decodes the intended phonemes directly from brain activity.
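The calibration stage, in which the system learns which neural patterns go with which sounds, can be illustrated with the simplest possible decoder: average the feature vectors recorded for each label, then classify a new pattern by its nearest average. This nearest-centroid classifier is a deliberately simple stand-in, not Neuralink's model (which has not been disclosed), and the three-channel "firing-rate" values are invented for illustration.

```python
import math

Vector = list[float]


def fit_centroids(trials: dict[str, list[Vector]]) -> dict[str, Vector]:
    """Average the feature vectors recorded for each label during calibration."""
    centroids = {}
    for label, vectors in trials.items():
        dims = len(vectors[0])
        centroids[label] = [sum(v[i] for v in vectors) / len(vectors) for i in range(dims)]
    return centroids


def decode(features: Vector, centroids: dict[str, Vector]) -> str:
    """Return the label whose centroid is closest in Euclidean distance."""
    return min(centroids, key=lambda label: math.dist(features, centroids[label]))


# Hypothetical calibration data: made-up firing-rate features from 3 channels.
calibration = {
    "hello": [[5.0, 1.0, 0.0], [6.0, 1.5, 0.5]],
    "water": [[0.5, 4.0, 3.0], [1.0, 5.0, 2.5]],
}
centroids = fit_centroids(calibration)
print(decode([5.5, 1.2, 0.3], centroids))  # hello
```

Real systems replace the averaging step with a trained neural network, but the shape is the same: labeled calibration trials in, a mapping from new activity to labels out.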

A machine learning model maps these neural patterns to phonemes, stitches phonemes into words, and feeds them to a text-to-speech engine. But the output does not sound like a generic computer voice.
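How per-moment model outputs become a clean phoneme sequence depends on the decoder, which Neuralink has not described. One common technique from speech recognition is greedy CTC (connectionist temporal classification) decoding: take the most likely symbol in each time frame, merge consecutive repeats, and drop a special "blank" symbol. The sketch below assumes the model emits one probability table per frame; the probabilities are made up.

```python
BLANK = "_"  # CTC "no output in this frame" symbol


def ctc_greedy_decode(frame_probs: list[dict[str, float]]) -> list[str]:
    """Pick the most likely symbol per frame, merge repeats, drop blanks."""
    best_per_frame = [max(p, key=p.get) for p in frame_probs]
    phonemes = []
    previous = None
    for symbol in best_per_frame:
        # A repeat is kept only if a different symbol (e.g. a blank) separates it.
        if symbol != previous and symbol != BLANK:
            phonemes.append(symbol)
        previous = symbol
    return phonemes


# Hypothetical per-frame probabilities while the patient attempts "cat".
frames = [
    {"K": 0.7, "AE": 0.1, "T": 0.1, BLANK: 0.1},
    {"K": 0.6, "AE": 0.2, "T": 0.1, BLANK: 0.1},
    {"K": 0.1, "AE": 0.1, "T": 0.1, BLANK: 0.7},
    {"K": 0.1, "AE": 0.8, "T": 0.05, BLANK: 0.05},
    {"K": 0.05, "AE": 0.2, "T": 0.7, BLANK: 0.05},
]
print(ctc_greedy_decode(frames))  # ['K', 'AE', 'T']
```

The resulting phonemes would then go to a word-assembly step and, finally, to the text-to-speech engine.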

The Ghost of a Voice

Here is the detail that makes this story personal rather than merely technical. Before ALS silenced him, Kenneth Shock had been recorded — home videos, voicemails, casual audio from 2020. Neuralink’s team located those recordings and used them to train an AI voice-cloning model. The result is a synthetic voice that sounds like Kenneth did before his disease progressed. The team calls it «Original Ken.»

So the pipeline runs like this: Kenneth thinks of what he wants to say. His motor cortex fires. The N1 chip captures the signal. A decoder turns it into phonemes. A speech synthesizer produces the words — in Kenneth’s own voice.

AI voice cloning takes a sample of someone’s speech (as little as a few minutes of audio) and builds a model that can generate new speech in the same voice, with the same timbre and cadence. It does not play back recordings — it synthesizes new sentences that were never spoken.

The system is not instant. There are noticeable delays between thought and output, and decoding errors occur. «We want to build a system that goes directly from the brain to voice in real time,» said the project lead in an update, acknowledging that the current version falls short of that goal.

Where This Sits in the Bigger Picture

Neuralink’s VOICE trial (registered as NCT07224256) received FDA Breakthrough Device Designation in May 2025, which means the agency considers it a potential advance over existing treatments for conditions like ALS, stroke, and spinal cord injury. Kenneth Shock is among the earliest participants. The trial is an early feasibility study — designed to assess safety and initial effectiveness, not to prove the technology works at scale.

Neuralink has not published peer-reviewed results from the VOICE trial. The demonstration is based on publicly shared video and company statements.

Neuralink is not the only group working on speech BCIs. Academic teams have been at this for over a decade. In 2023, Stanford researchers published a high-performance speech neuroprosthesis in Nature. In 2024, a BrainGate team demonstrated real-time speech synthesis in a chronically implanted ALS patient with 97% word accuracy. A 2025 study showed stable decoding from a speech BCI without recalibration for three months.
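Accuracy figures like these are word-level. A standard metric is word error rate (WER): the minimum number of word substitutions, deletions, and insertions needed to turn the decoded sentence into the intended one, divided by the length of the intended sentence. Word accuracy is often reported as 1 minus WER, so 97% accuracy corresponds to roughly a 3% WER. The sketch below is a textbook edit-distance implementation; the example sentences are made up.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """(substitutions + deletions + insertions) / number of reference words."""
    ref, hyp = reference.split(), hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")
    # prev[j] = edit distance between the reference prefix seen so far and hyp[:j]
    prev = list(range(len(hyp) + 1))
    for i, r in enumerate(ref, start=1):
        curr = [i] + [0] * len(hyp)
        for j, h in enumerate(hyp, start=1):
            curr[j] = min(
                prev[j] + 1,             # deletion: reference word missing
                curr[j - 1] + 1,         # insertion: extra decoded word
                prev[j - 1] + (r != h),  # substitution (free if words match)
            )
        prev = curr
    return prev[-1] / len(ref)


wer = word_error_rate("i want some water please", "i want some waiter please")
print(wer)  # 0.2  (one substitution out of five words, i.e. 80% word accuracy)
```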

What distinguishes Neuralink is the hardware: a fully wireless, high-density implant that requires no external connectors or wired rigs. Synchron, another competitor, offers a less invasive option — a stent-like device threaded through the jugular vein into a brain blood vessel, no craniotomy needed, but with fewer electrodes and lower bandwidth. Paradromics, a third entrant, received FDA clearance to begin human speech-restoration trials in 2025.

Five Questions That Still Need Answers

How long does the implant last? Neuralink's first patient (Noland Arbaugh, PRIME trial, 2024) experienced partial thread retraction within weeks of his surgery. Whether the N1 maintains stable performance over years is unknown.

Is it really the patient speaking, or the AI? This question surfaced repeatedly in public discussions. The decoder translates neural intentions, but the voice cloning adds a layer of synthesis. For patients without preserved audio recordings, a generic voice would be the only option — raising questions about identity and authenticity.

Can it reach real-time speed? Current delays make natural conversation difficult. Real-time decoding at conversational pace (roughly 150 words per minute) remains the benchmark.
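To put the 150-words-per-minute benchmark in concrete terms, a quick conversion shows the time available per word. At that pace, the system would need to deliver a word roughly every 400 milliseconds on average to keep up. The helper names are illustrative only.

```python
def ms_per_word(words_per_minute: float) -> float:
    """Average time available per word at a given speaking rate, in milliseconds."""
    return 60_000 / words_per_minute


def words_per_minute(word_count: int, seconds: float) -> float:
    """Convert a measured output (words produced in a given time) to a rate."""
    return word_count * 60 / seconds


print(ms_per_word(150))          # 400.0
print(words_per_minute(75, 30))  # 150.0
```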

What about emotional expression? The AI voice produces words but struggles with tone, emphasis, anger, or sadness. One observer noted: «Imagine never being able to express anger or sadness in how you talk.» Solving prosody — the melody of speech — is an active research challenge.

Will it ever be affordable? The surgical procedure, the implant, and the ongoing support represent costs that have not been publicly disclosed. For a technology targeting ALS (which affects roughly 30,000 Americans at any given time), the path to broad accessibility is unclear.

Kenneth Shock does not have answers to all of these questions. What he does have, for the first time in years, is a way to tell his kids to stop cheating at Mario Kart — in his own voice.

Related Articles

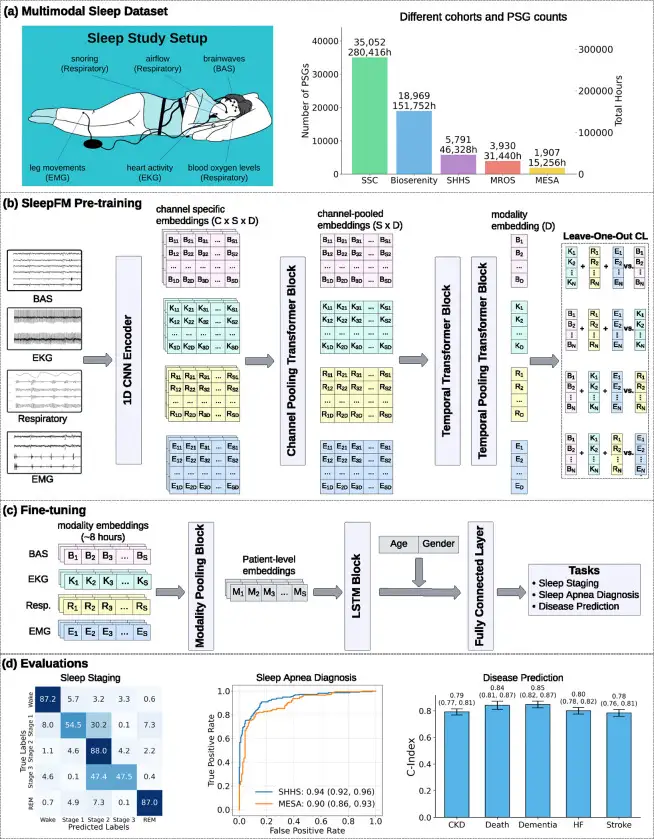

SleepFM: 130 Diseases From One Night of Sleep

Stanford trained a neural network on 585,000 hours of sleep data. It detects Parkinson's, dementia, and cancer years before symptoms appear.

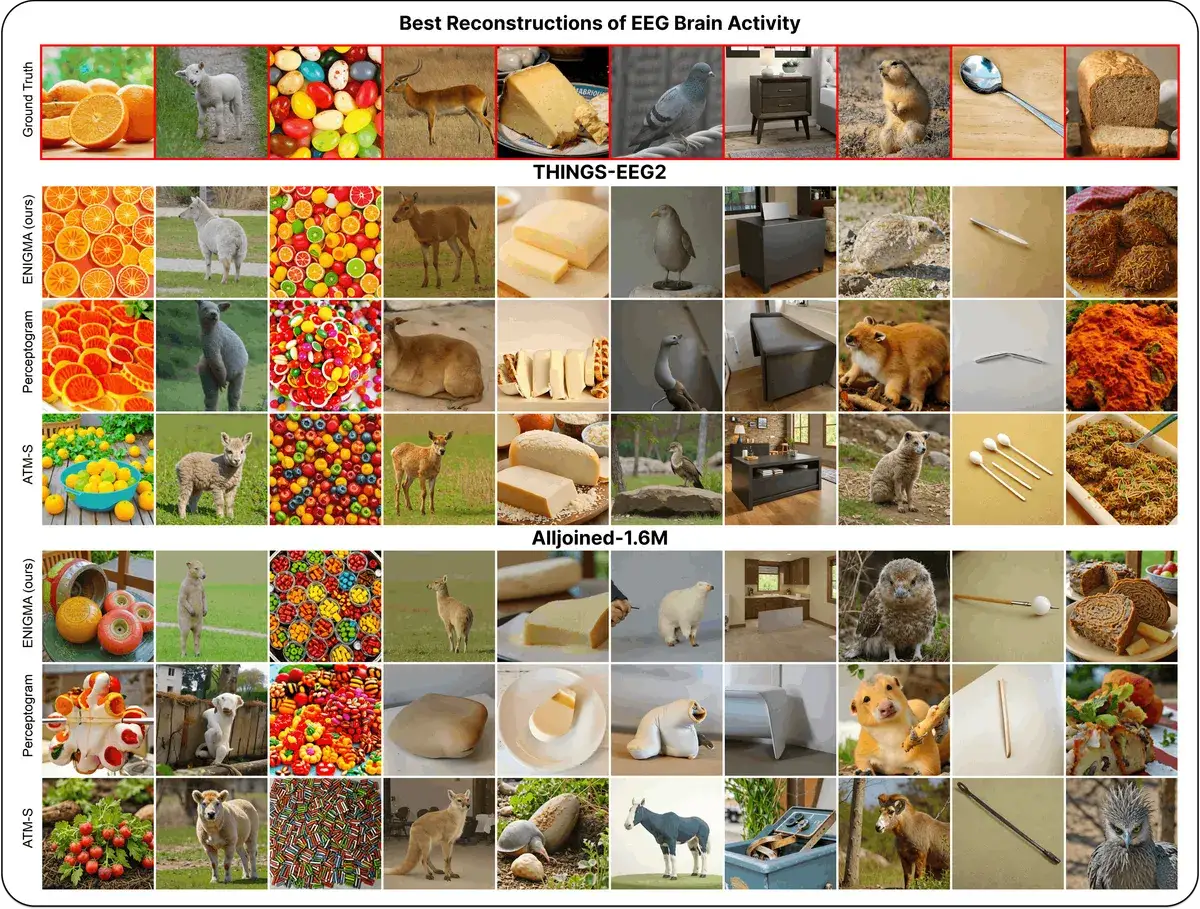

ENIGMA: Reading Minds in 15 Min via EEG

ENIGMA reconstructs images from EEG signals after 15 min of calibration, using under 1% of previous methods' parameters.

Your Liver, Not Your Legs, May Shield Your Brain From Alzheimer's

UCSF scientists found that a liver enzyme called GPLD1, released during exercise, repairs the blood-brain barrier and reverses memory loss in Alzheimer's mice.