Golden Ratio 1.618 Found in How Your Brain Processes Info

Authors: P. Padilla, O. López-Corona, E. Ramírez-Carrillo, A. Hernández Sánchez

Why It Matters

The golden ratio (φ ≈ 1.618) has haunted humanity for millennia — from the Parthenon and Da Vinci’s Vitruvian Man to nautilus shells and galactic spirals. But finding this proportion in seashells is one thing. Discovering it in the very mechanism of thought is quite another.

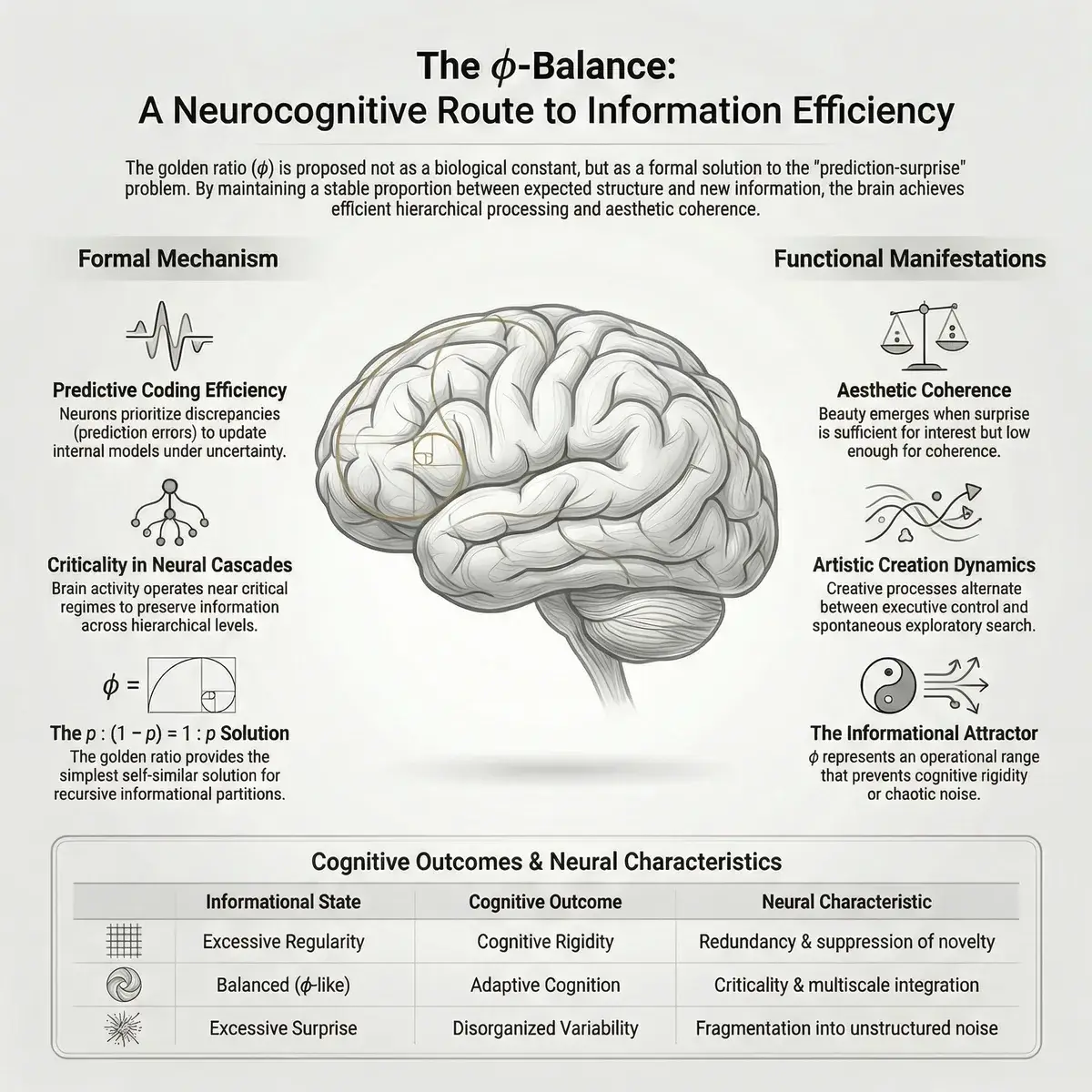

A team of researchers from Mexico and Spain has proposed a mathematical model in which the golden ratio isn't a coincidence but an optimal strategy for information processing. According to their theory, the brain splits incoming information in golden-ratio proportions: ~62% allocated to the «known» (predictions), ~38% to the «unknown» (surprise). In their account, it is precisely this proportion that makes us not merely resilient to chaos but antifragile: capable of growing stronger through stress.

If this sounds like an intersection of mathematics, neuroscience, and philosophy — that’s exactly what it is. Welcome to the most beautiful crossroads in recent science.

The Core Idea

Imagine you’re a head chef. Every day you prepare meals, and you have your tried-and-true recipes (your «known»). But if you only follow recipes, you’ll never invent anything new. And if you experiment with unfamiliar ingredients every day, half your dishes will be inedible.

The authors claim there’s a mathematically ideal proportion between «recipes» and «experiments.» And that proportion is the golden ratio.

Golden Ratio (φ): an irrational number approximately equal to 1.618, defined by the proportion in which the whole relates to the larger part as the larger part relates to the smaller. In numbers: a segment of length 1 divided this way splits into parts of about 0.618 and 0.382.
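As a worked equation, with x standing for the larger part of a unit segment (our notation, not the paper's), the golden-ratio definition gives:

$$
\frac{1}{x} = \frac{x}{1-x}
\;\Longrightarrow\; x^{2} + x - 1 = 0
\;\Longrightarrow\; x = \frac{\sqrt{5}-1}{2} \approx 0.618 = \frac{1}{\varphi},
\qquad 1 - x = x^{2} \approx 0.382,
\qquad \varphi = \frac{1+\sqrt{5}}{2} \approx 1.618 .
$$

So the 0.618 that appears throughout this article is 1/φ, the reciprocal of the golden ratio, and the smaller part 0.382 equals its square.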

Antifragility — Nassim Taleb’s term for systems that don’t merely withstand chaos and stress but improve because of them. Fragile things break under impact. Robust things endure. Antifragile things get tempered.

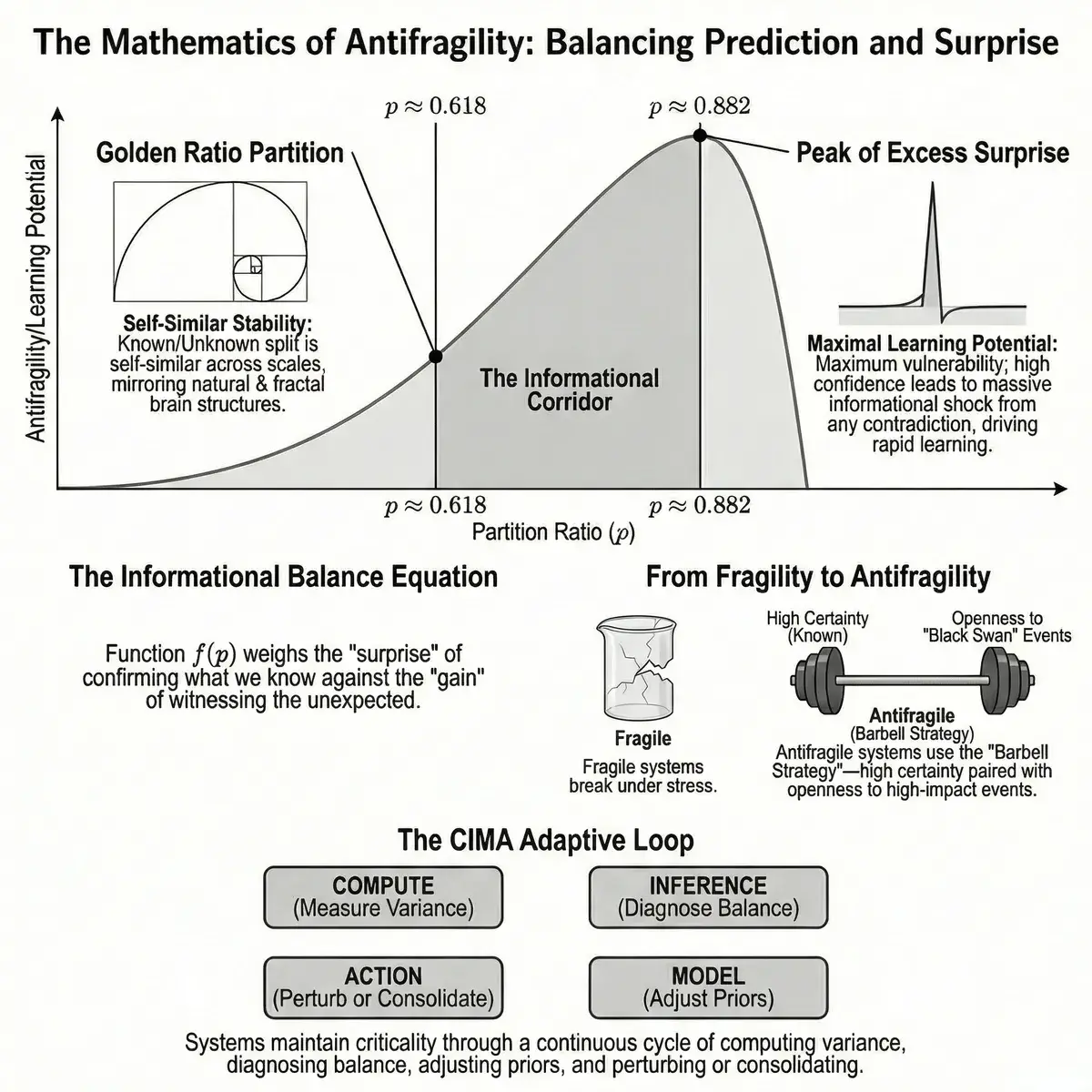

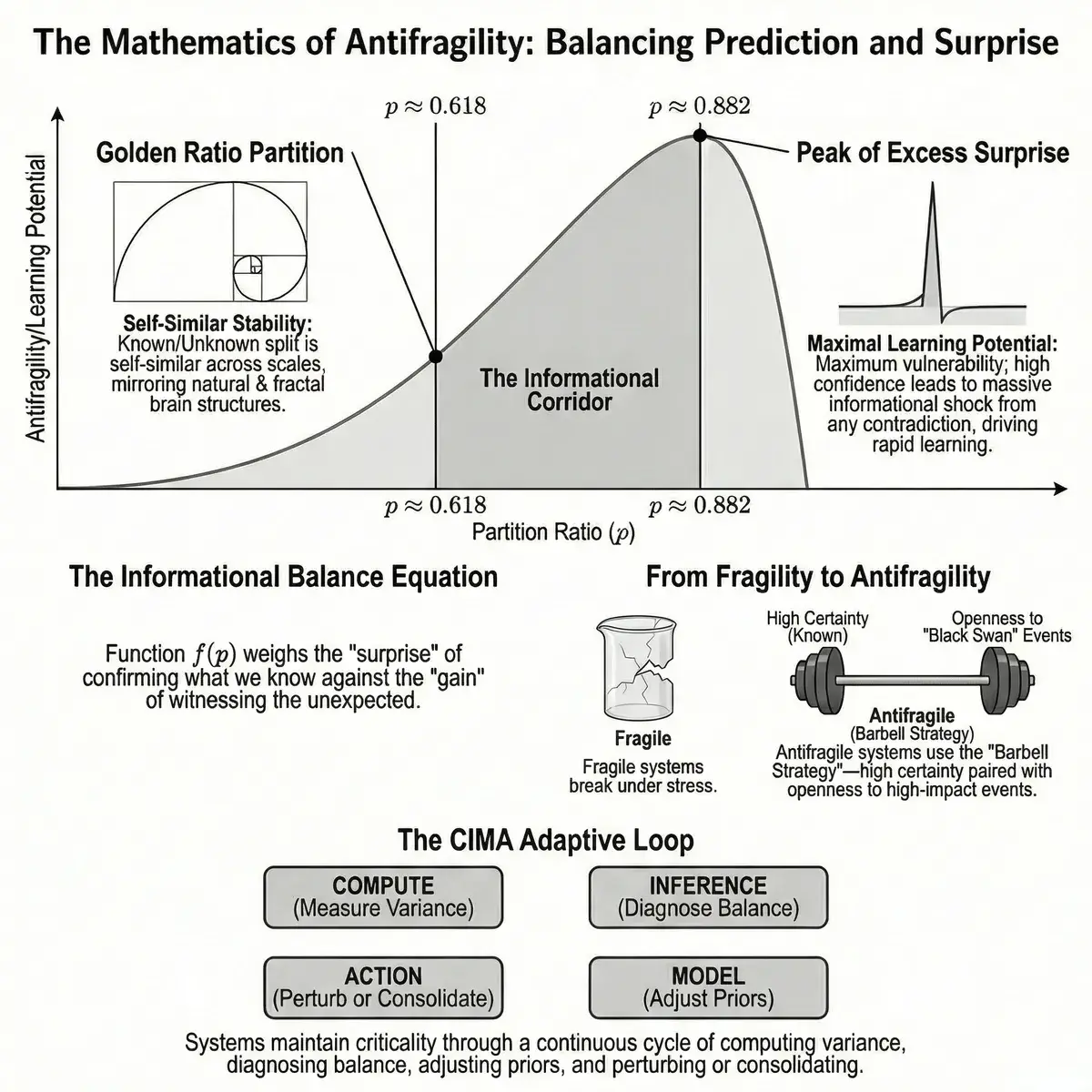

The paper’s central formula is the «balance function» f(p), where p is the fraction of explained information (what the brain successfully predicts). This function measures the net informational payoff from encountering reality: how much new knowledge we gain, minus the cost of revising old beliefs.

How It Works

The balance function consists of two components:

Component A: expected surprise from the unknown, -(1 - p)·ln(1 - p). This is the potential information we gain if the unexpected happens: the more we admit «not knowing», the more we can learn.

Component B: the cost of confirming expectations, +ln(p). Because ln(p) is negative for every p < 1, this term always lowers the payoff. A paradox: even when we’re right, confidence isn’t free, because confirming predictions consumes cognitive resources.

Explained Variance (p) — the fraction of incoming information that a model (or brain) can explain using its current knowledge. If p = 0.7, the brain «understands» 70% of what’s happening, and 30% remains a mystery.
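Written out in full, the two components add up to the balance function. Its first two derivatives (our own working from the formula as stated, not quoted from the paper) show why there is exactly one peak:

$$
\begin{aligned}
f(p) &= -(1-p)\ln(1-p) + \ln p, \qquad 0 < p < 1,\\
f'(p) &= \ln(1-p) + 1 + \frac{1}{p},\\
f''(p) &= -\frac{1}{1-p} - \frac{1}{p^{2}} < 0 .
\end{aligned}
$$

Since f″ < 0, f is concave, and f′ falls from +∞ near p = 0 to −∞ near p = 1, so f′(p*) = 0 has a single solution. Solving ln(1 − p*) = −1 − 1/p* numerically gives p* ≈ 0.882 and f(p*) ≈ 0.127.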

Two values of p stand out:

p* ≈ 0.882: the function’s maximum. This is the point of «peak vulnerability»: the brain explains almost everything (88%), but the remaining 12% of unknowns can deal a disproportionately large blow, much as a bank confident in 88% of its investments can still be destroyed by the remaining 12% of toxic assets.

pφ ≈ 0.618: the golden-ratio point (pφ = 1/φ = φ − 1). Here, mathematical magic emerges: the ratio of «everything» to «known» (1 : p) equals the ratio of «known» to «unknown» (p : (1 − p)). This is self-similarity: the same proportion repeats at every level, which in the authors’ picture runs from individual synapses to entire brain regions.
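A short numerical check recovers both values, assuming the balance function is exactly the sum of Components A and B as written above. The function names are ours, and bisection on f′ is just one convenient way to locate the peak, not necessarily how the authors computed it:

```python
import math

PHI = (1 + math.sqrt(5)) / 2  # golden ratio, ~1.618


def balance(p: float) -> float:
    """Balance function f(p) = -(1-p)*ln(1-p) + ln(p), as described in the text."""
    return -(1 - p) * math.log(1 - p) + math.log(p)


def balance_slope(p: float) -> float:
    """Derivative f'(p) = ln(1-p) + 1 + 1/p."""
    return math.log(1 - p) + 1 + 1 / p


def find_peak(lo: float = 1e-6, hi: float = 1 - 1e-9, tol: float = 1e-12) -> float:
    """Bisection on f'(p): f' > 0 left of the peak and f' < 0 right of it (f is concave)."""
    while hi - lo > tol:
        mid = (lo + hi) / 2
        if balance_slope(mid) > 0:
            lo = mid
        else:
            hi = mid
    return (lo + hi) / 2


p_star = find_peak()
p_phi = 1 / PHI  # ~0.618

print(f"p*                = {p_star:.4f}")
print(f"f(p*)             = {balance(p_star):.4f}")
print(f"p_phi             = {p_phi:.4f}")
print(f"1 : p_phi         = {1 / p_phi:.4f}")
print(f"p_phi : (1-p_phi) = {p_phi / (1 - p_phi):.4f}")
```

The printed p* and f(p*) round to the 0.882 and 0.127 quoted in this article, and the last two lines both come out at about 1.618, which is the self-similarity condition in numbers.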

The CIMA Loop: How the Brain Maintains Balance

The authors propose a four-stage cycle that the brain (or any adaptive system) runs continuously:

- Compute — estimate what fraction of incoming information can be explained

- Inference — determine the current operating regime: too confident or too bewildered?

- Model — adjust internal parameters: boost learning or reinforce existing models

- Action — if overwhelmed by surprise, consolidate proven models; if overconfident, deliberately introduce perturbation
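The four stages above can be sketched as a small control loop. This is a toy illustration under several assumptions of our own: p is estimated as ordinary explained variance, the corridor bounds 0.618 and 0.882 are used as hard switching thresholds, and the two «internal parameters» (a learning rate and an exploration-noise level, both hypothetical) are simply scaled up or down. None of these names or update rules come from the paper:

```python
from __future__ import annotations

from dataclasses import dataclass

# Corridor bounds quoted in the text. Treating them as hard switching
# thresholds is an assumption of this sketch, not a rule from the paper.
P_PHI = 0.618   # golden-ratio point
P_STAR = 0.882  # maximum of the balance function


@dataclass
class Params:
    learning_rate: float      # hypothetical knob: how strongly the model updates
    exploration_noise: float  # hypothetical knob: size of deliberate perturbations


def explained_fraction(observed: list[float], predicted: list[float]) -> float:
    """Compute: explained-variance fraction p = 1 - SS_res / SS_tot, clipped to [0, 1]."""
    mean = sum(observed) / len(observed)
    ss_tot = sum((y - mean) ** 2 for y in observed)
    if ss_tot == 0:
        return 1.0  # constant input: treated as fully explained (a simplification)
    ss_res = sum((y - y_hat) ** 2 for y, y_hat in zip(observed, predicted))
    return min(1.0, max(0.0, 1.0 - ss_res / ss_tot))


def infer_regime(p: float) -> str:
    """Inference: locate p relative to the corridor [P_PHI, P_STAR]."""
    if p < P_PHI:
        return "overwhelmed"    # too much surprise
    if p > P_STAR:
        return "overconfident"  # too little surprise
    return "corridor"


def cima_step(observed: list[float], predicted: list[float],
              params: Params, factor: float = 1.5) -> tuple[str, Params]:
    """One Compute -> Inference -> Model -> Action pass (hypothetical update rules)."""
    p = explained_fraction(observed, predicted)  # Compute
    regime = infer_regime(p)                     # Inference
    lr, noise = params.learning_rate, params.exploration_noise
    if regime == "overwhelmed":
        # Model + Action: consolidate proven models by damping updates and perturbations.
        lr, noise = lr / factor, noise / factor
    elif regime == "overconfident":
        # Model + Action: boost learning and deliberately inject perturbation.
        lr, noise = lr * factor, noise * factor
    # Inside the corridor, parameters are left unchanged.
    return regime, Params(lr, noise)


observed = [1.0, 2.0, 3.0, 4.0, 5.0]
for predicted in ([3.0] * 5, [2.0, 2.0, 3.0, 4.0, 4.0], observed):
    regime, new_params = cima_step(observed, predicted, Params(0.1, 0.01))
    print(regime, new_params)
```

The three example predictions explain 0%, 80% and 100% of the variance, so the loop reports «overwhelmed», «corridor» and «overconfident» in turn. A real system would replace the fixed multiplicative factor with whatever adaptation rule the model under study actually uses.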

Criticality: a state at the boundary between order and chaos. Physicists associate it with the critical point of a continuous phase transition, such as water at its liquid–vapor critical point, where the distinction between liquid and gas disappears. In the critical state, the brain is maximally sensitive to signals and maximally flexible.

The key claim: optimal operation happens not at a single point but within a corridor between pφ (0.618) and p* (0.882). The authors argue that this «informational corridor» corresponds to what neuroscientists call criticality, the regime in which neural networks produce characteristic power laws (such as 1/f noise) and neuronal avalanches.

Results

The authors didn’t conduct experiments — this is a theoretical work. But their mathematical framework builds on extensive experimental evidence:

Neuronal avalanches. Beggs and Plenz (2003) showed that spontaneous activity in cortical slices propagates in «avalanches» whose sizes follow a power law, a hallmark of operating near a critical point. The brain balances on a razor’s edge: tilt one way and activity dies out; tilt the other and it runs away, as in an epileptic seizure.

Maximum dynamic range. Kinouchi and Copelli (2006) showed, with a model of excitable networks and a mean-field analysis, that dynamic range (the span of stimulus intensities a network can distinguish) peaks precisely at the critical point: such a network can respond to both a whisper and a shout.

The free energy principle. Friston (2010) described the brain as a system that constantly minimizes free energy, which under common simplifying assumptions amounts to minimizing «prediction error»: the gap between expected and actual reality. The authors’ model extends this: the brain doesn’t just minimize error, it keeps it at an optimal level.

Statistical physics. The authors argue that their formula is isomorphic to the balance between the mean field and its fluctuations in the physics of phase transitions. In their interpretation, the golden-ratio point marks the boundary where simple mean-field theories break down and true critical behavior begins, the role played by the Ginzburg criterion.
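The «razor’s edge» behind the neuronal-avalanche evidence can be illustrated with a toy branching process, a standard textbook model of criticality. This is our own illustration, not Beggs and Plenz’s analysis or the authors’ model: each active unit gets two chances to activate a downstream unit, so the branching ratio σ (average number of units each one activates) sets the regime, and the cap, sample count and seed are arbitrary choices:

```python
import random


def avalanche_size(sigma: float, cap: int, rng: random.Random) -> int:
    """Total activations in one avalanche of a simple branching process.

    Every active unit gets two chances to activate a downstream unit, each
    succeeding with probability sigma / 2, so the mean number of "offspring"
    per unit (the branching ratio) is sigma. Requires 0 <= sigma <= 2.
    Growth stops once the running total reaches `cap`, so runaway avalanches
    are truncated rather than simulated forever.
    """
    p_transmit = sigma / 2.0
    active, total = 1, 1
    while active > 0 and total < cap:
        active = sum(1 for _ in range(2 * active) if rng.random() < p_transmit)
        total += active
    return total


def summarize(sigma: float, n: int = 2000, cap: int = 500,
              seed: int = 0) -> tuple[float, float]:
    """Mean avalanche size and the fraction of avalanches that hit the cap."""
    rng = random.Random(seed)
    sizes = [avalanche_size(sigma, cap, rng) for _ in range(n)]
    mean_size = sum(sizes) / n
    frac_capped = sum(size >= cap for size in sizes) / n
    return mean_size, frac_capped


results = {}
for label, sigma in [("subcritical", 0.5), ("critical", 1.0), ("supercritical", 1.5)]:
    results[label] = summarize(sigma)
    mean_size, frac_capped = results[label]
    print(f"{label:<13} sigma={sigma:.1f}  mean size={mean_size:8.1f}  hit cap={frac_capped:6.1%}")
```

Below σ = 1 avalanches die out quickly (the mean size should sit near the theoretical 1/(1 − σ) = 2), above it most avalanches run away until they hit the cap, and at σ = 1 sizes spread over a wide range with only a small fraction reaching the cap, which is the heavy-tailed behavior that power-law analyses look for.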

| Quantity | Meaning | Interpretation |
|---|---|---|
| p* ≈ 0.882 | Maximum of f(p) | Peak vulnerability to errors |
| pφ ≈ 0.618 | Golden-ratio point (1/φ) | Self-similar optimum |
| 62% : 38% | Split at pφ | Exploitation vs. exploration |
| f(p*) ≈ 0.127 | Maximum value of f(p) | Peak informational payoff |

Critical Assessment

This paper is a preprint and has not yet undergone formal peer review.

Strengths:

- An elegant mathematical model unifying disparate concepts (golden ratio, antifragility, criticality, free energy) into a single framework

- Bridges statistical physics and neuroscience — the physical interpretation through Ginzburg-Landau theory adds theoretical depth

- A concrete operational cycle (CIMA) that can potentially be tested experimentally and applied to AI system design

Limitations:

- The work is entirely theoretical — there is no experimental verification that the brain actually operates near pφ ≈ 0.618. Beautiful mathematics doesn’t guarantee biological reality

- Critics note that the golden ratio in neuroscience often turns out to be «curve-fitted» — studies yield contradictory results, and measuring the irrational number φ in real data is always approximate

- The connection between the balance function’s self-similarity and Taleb’s antifragility (convex response) is not straightforward: the authors move from the concave f(p) to a convex Φ(Π) through the CIMA loop, but the rigor of this step raises questions

Open questions:

- Can the «informational corridor» between 0.618 and 0.882 be experimentally measured in real neural activity?

- Does this principle apply to artificial neural networks — and if so, could it improve AI training?

What’s Next

If the authors’ model holds, the implications extend far beyond neuroscience. The golden ratio as an optimal balance between exploitation and exploration is a principle that could find applications from AI system design to economic risk management.

In the near term, the main challenge is translating this beautiful theory into testable experiments. The authors already outline a path: measure the explained variance fraction in neural recordings (EEG/fMRI) and verify whether the brain truly «prefers» operating in the 0.618–0.882 corridor.

For now, we can simply appreciate the beauty of the idea: the very number behind the spiral of a sunflower, long associated with the proportions of the human body, may also shape how we think. In this view, the brain isn’t a computer striving for accuracy but an antifragile system that deliberately leaves room for error, because errors, in the right proportion, make us smarter.

References


- The free-energy principle: a unified brain theory?

- Neuronal Avalanches in Neocortical Circuits

- Optimal dynamical range of excitable networks at criticality

- Self-organized criticality: An explanation of the 1/f noise

- Emergent complex neural dynamics

- Golden ratio brain frequencies and cross-frequency coupling

Related Articles

Your Liver, Not Your Legs, May Shield Your Brain From Alzheimer's

UCSF scientists found that a liver enzyme called GPLD1, released during exercise, repairs the blood-brain barrier and reverses memory loss in Alzheimer's mice.

Big Five Personality: 254 Genes Found, Sixth Factor (2025)

Genome study of 600K people found 254 genes shaping personality. A 6th trait beyond Big Five predicts mortality. Seven 2024-2025 studies reviewed.

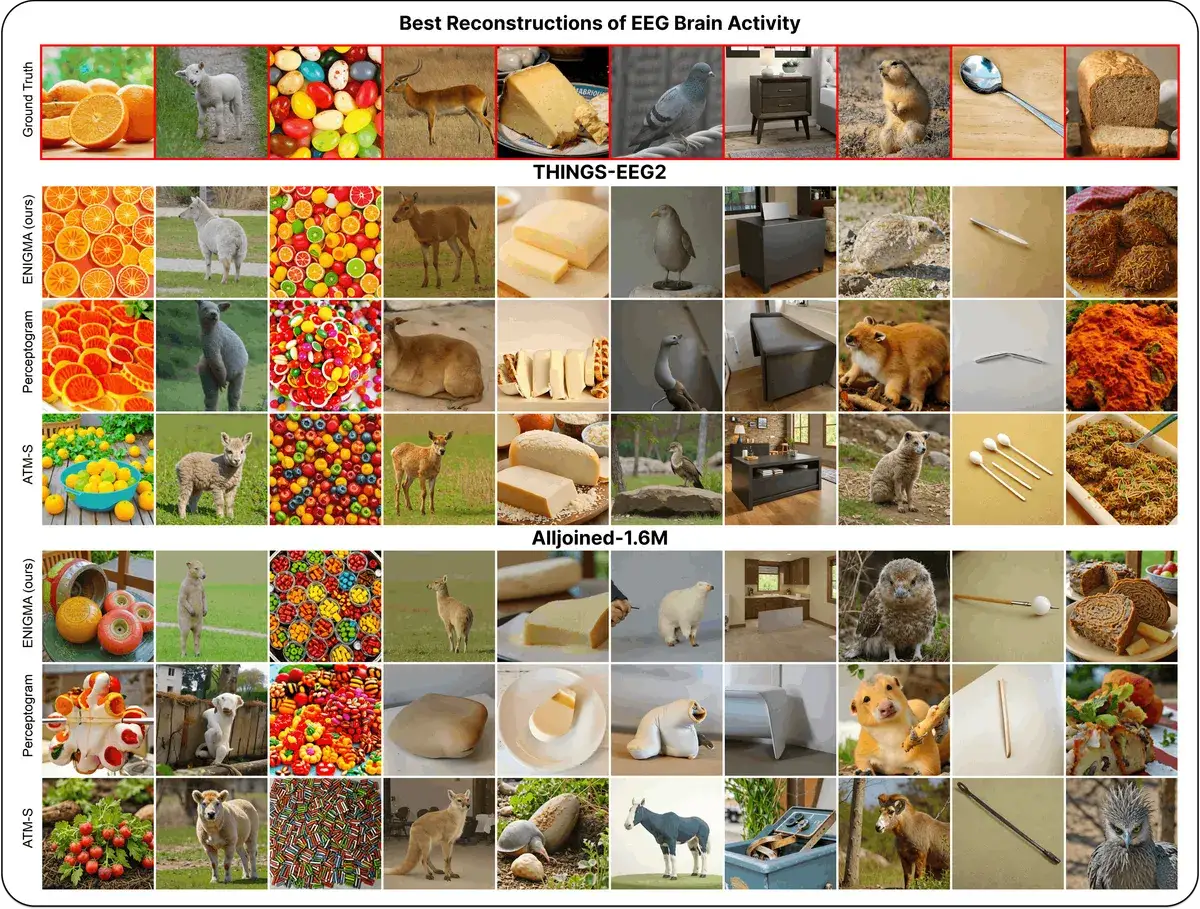

ENIGMA: Reading Minds in 15 Min via EEG

ENIGMA reconstructs images from EEG signals after 15 min of calibration, using under 1% of previous methods' parameters.