ENIGMA: Reading Minds in 15 Min via EEG

Authors: Reese Kneeland, Wangshu Jiang, Ugo Bruzadin Nunes, Paul Steven Scotti, Arnaud Delorme, Jonathan Xu

Why It Matters

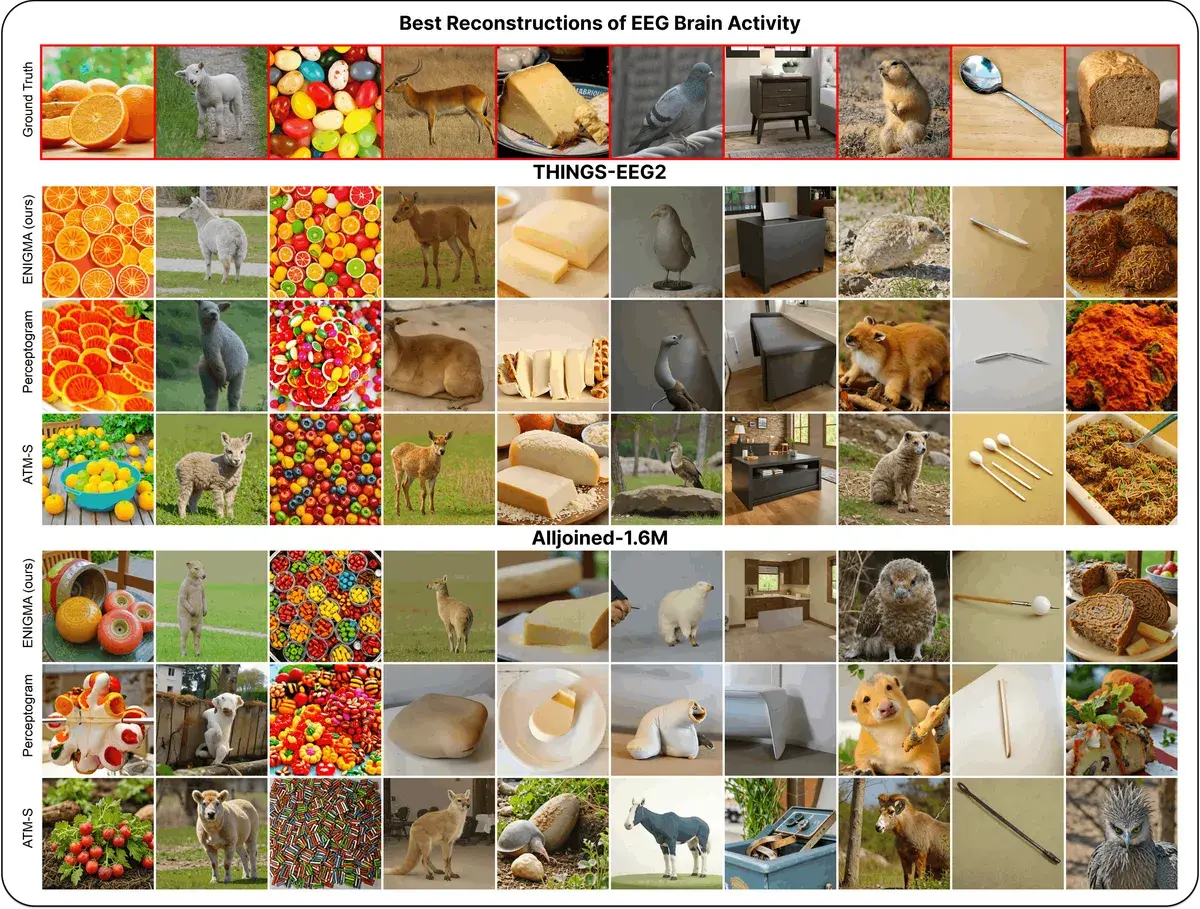

Imagine putting on a compact headset with electrodes, looking at a screen for 15 minutes — and after that, a computer can literally see what you see. Not blurry blobs, but recognizable images: oranges, sheep, furniture, faces.

Sounds like science fiction, but that’s exactly what ENIGMA demonstrates. And the most exciting part — it doesn’t require an MRI scanner costing tens of thousands of dollars. A consumer EEG headset you can buy online is enough.

The Core Idea

ENIGMA is a model that reconstructs images from electrical brain activity recorded through EEG (electroencephalography).

EEG (electroencephalography) — a method of recording electrical brain activity through electrodes placed on the scalp. Unlike MRI, it doesn’t require bulky equipment and can be used in everyday settings.

Three breakthroughs set ENIGMA apart from all previous approaches:

-

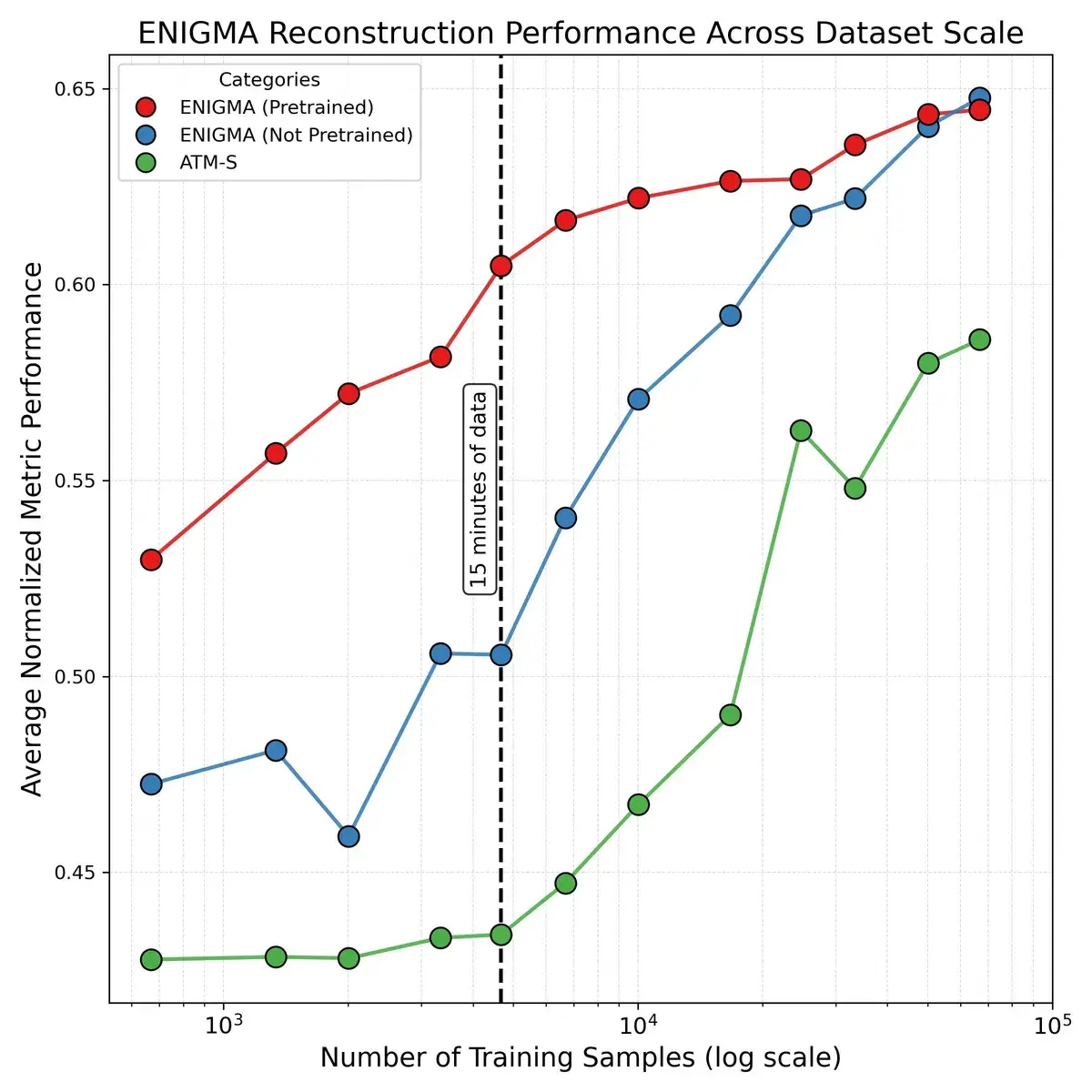

15 minutes instead of hours. Previous systems required hours of data for each new user. ENIGMA achieves superior results after just 15 minutes of calibration.

-

Less than 1% of parameters. The model is 165 times more compact than competitors when serving 30 users simultaneously — making real deployment on ordinary devices feasible.

-

Works with affordable sensors. Competitors break down on consumer EEG headsets ($2,200). ENIGMA maintains performance.

How It Works

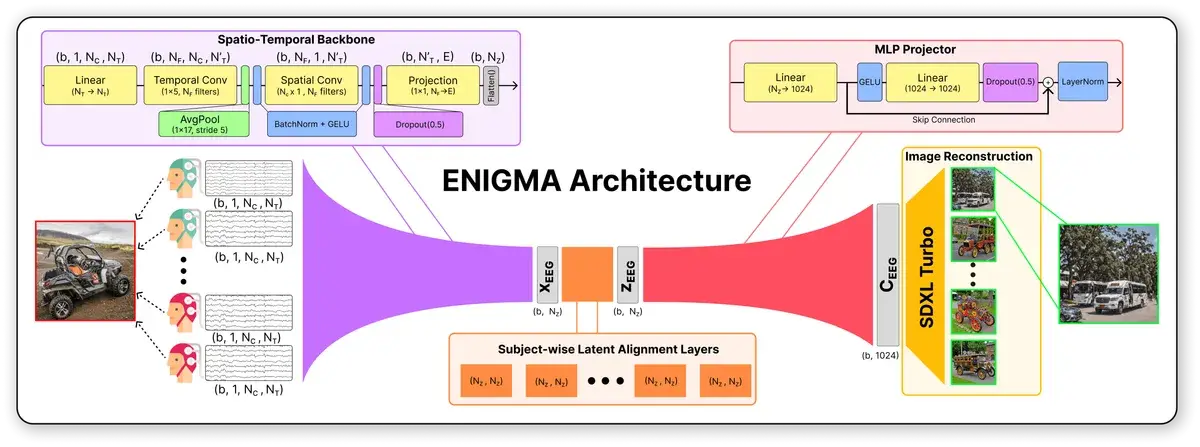

ENIGMA’s architecture consists of four sequential blocks:

1. Spatio-temporal backbone. The raw EEG signal (channels × time points) is processed as a 2D «image.» Temporal convolutions capture patterns over time, spatial ones capture inter-electrode relationships. Output: a compact 184-dimensional vector.

Backbone — the core component of a neural network that extracts key features from input data. All other components are built on top of it.

2. Subject-wise alignment layers. Each person’s brain generates slightly different signals. Instead of a separate model for each user, ENIGMA adds a tiny personal layer (184×184 weights) — this is the secret to parameter efficiency.

3. MLP projector. Transforms the 184-dimensional brain activity vector into CLIP’s 1024-dimensional space — a universal representation of visual information.

CLIP — a model by OpenAI that «understands» the relationship between images and text. It serves as a universal language between vision and reasoning for AI systems.

4. Image generator. Stable Diffusion XL Turbo converts the CLIP vector into a final image in just 4 diffusion steps.

Key insight: the authors dropped normalization of CLIP targets in the loss function (unlike competitors), preserving the embedding space geometry and eliminating the need for a separate «diffusion prior» training stage.

Results

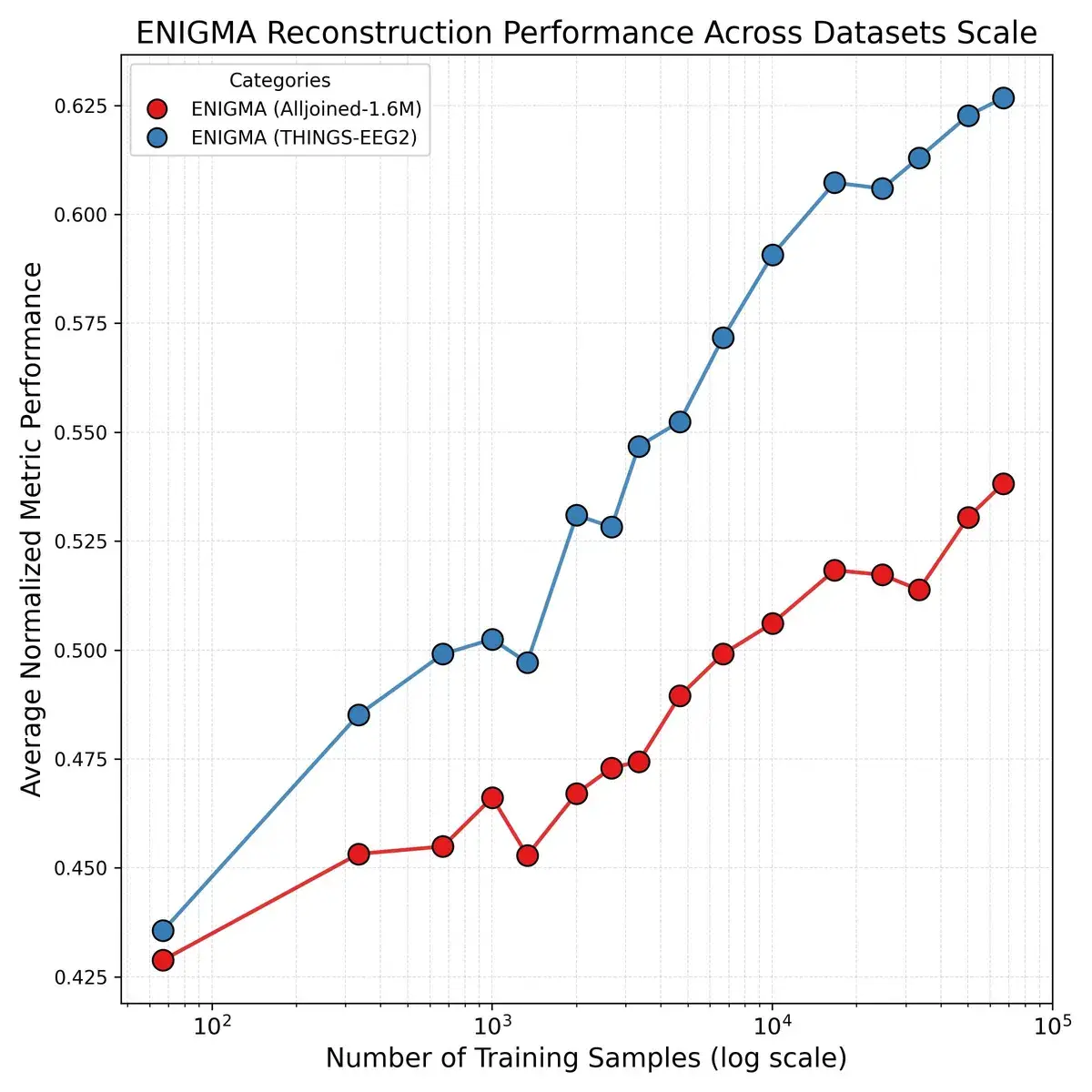

The model was tested on two datasets:

- THINGS-EEG2 — research-grade equipment (~$60,000), 64 channels, 1000 Hz

- AllJoined-1.6M — consumer headset (~$2,200), 32 channels, 250 Hz

| Metric | ENIGMA | ATM-S (competitor) | Perceptogram |

|---|---|---|---|

| CLIP accuracy | 80,3% | 55,0% | — |

| Human identification | 86,0% | 56,8% | — |

| Parameters (30 users) | 2.4M | 384M | 4,700M |

On consumer equipment (AllJoined-1.6M), ENIGMA achieves 70,7% human identification accuracy, while ATM-S scores only 52,2%.

Human evaluation. 545 volunteers participated in a blind test: they were shown an original image and two reconstructions, and asked to choose the more similar one. ENIGMA won across all conditions.

Critical Analysis

This paper is a preprint and has not yet undergone formal peer review.

ENIGMA’s most significant contributions sit at the intersection of scientific novelty and practical consequence. It is the first system to demonstrate competitive-quality visual decoding on consumer-grade EEG hardware — a distinction that matters because every prior method quietly assumed research-lab equipment as a prerequisite. Compressing the multi-user model to 2.4 million parameters (165 times fewer than the nearest competitor) is not merely an engineering achievement; it is the difference between a system that lives in a server rack and one that could run on a laptop. Equally important is the behavioral validation: involving 545 human judges in a blind evaluation test moves the results beyond the self-referential world of automated metrics and grounds them in perceptual reality. The authors also commit to reproducibility — the model runs on 8 GB VRAM consumer GPUs and code is promised for public release.

The limitations are real and worth stating plainly. Adding more training subjects does not raise the model’s performance ceiling: multi-subject scaling improves generalization but not peak quality, which suggests that the architecture may be hitting a fundamental limit in what scalp EEG can encode. The entire evaluation also takes place within a single narrow paradigm — viewing static images from the THINGS dataset — leaving open how ENIGMA would fare on other BCI tasks such as imagined speech, motor imagery, or emotional state decoding. And despite the consumer-hardware result, the quality gap between a $60,000 research setup and a $2,200 headset remains visible in the numbers; the technology works on affordable hardware, but not equally well.

The deepest open question is whether the model could decode mental imagery — images that exist only in a person’s mind, with no external stimulus present. That would be a qualitatively different capability, and nothing in the current work addresses it. Alongside the technical frontier sits an ethical one: a system that recovers visual experience from brain signals with 86% human-rated accuracy, running on inexpensive hardware, is not a distant hypothetical. The authors themselves call for a formal ethical framework to govern its use. That framework does not yet exist — and building it may prove harder than building the model.

Conclusions

ENIGMA is a step from laboratory demonstrations toward real brain-computer interfaces. When decoding visual experience requires only 15 minutes of calibration and a $2,200 headset, the technology stops being a toy for neuroscientists.

But with capabilities come risks. The authors honestly acknowledge: the ability to read visual experience from brain activity demands strict ethical frameworks — for privacy protection, transparency, and responsible deployment. Until such frameworks exist, every step forward in «mind reading» is simultaneously a promise and a warning.

Related Articles

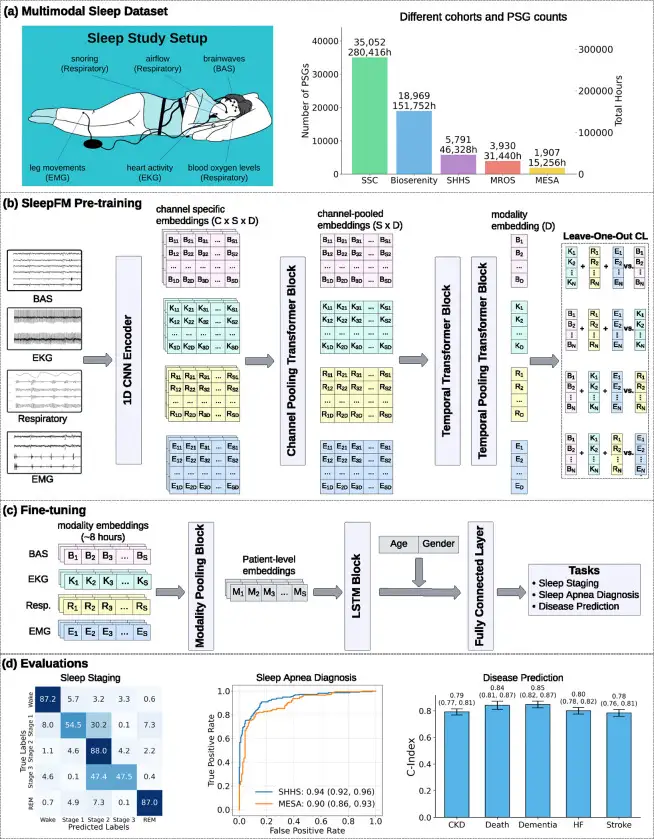

SleepFM: 130 Diseases From One Night of Sleep

Stanford trained a neural network on 585,000 hours of sleep data. It detects Parkinson's, dementia, and cancer years before symptoms appear.

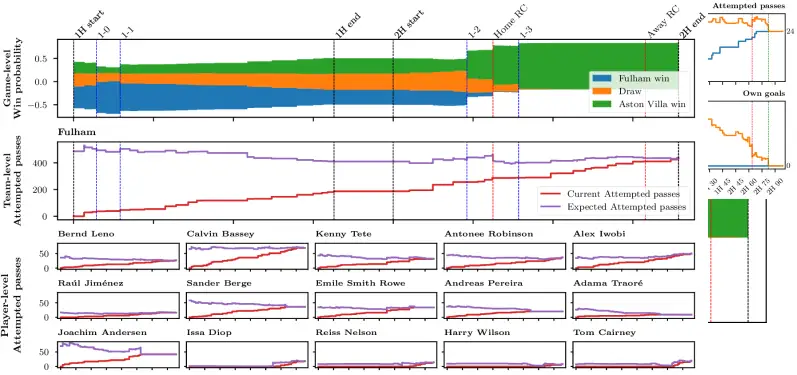

75,000 Predictions Per Match: AI in Football

Stats Perform researchers built an Axial Transformer neural network that generates 75,000 live predictions per football match with sub-second latency

CDG-2: A Galaxy Made of 99% Dark Matter

CDG-2 in the Perseus cluster is 99% dark matter, detected only through four globular clusters — the first galaxy ever found exclusively via its cluster population.