ChatGPT as a Crutch: Memory Drops 11 Points

Authors: André Barcaui

One hundred twenty students had two weeks to research artificial intelligence and present their findings in a ten-minute talk. Half were told to use ChatGPT freely. The other half were restricted to lecture notes, academic databases, and plain search. Forty-five days later, they sat a closed-book, twenty-question test designed as a comprehension check rather than a memory drill. The traditional group got 68.5% right. The ChatGPT group got 57.5%. That’s an eleven-point gap. And no, it wasn’t because the ChatGPT group studied longer. It was actually the opposite.

The Eleven Points You Pay for the Time You Think You Saved

Illustration. Source: PsyPost

The experiment was run by André Barcaui — a psychologist and business educator affiliated with Fundação Getulio Vargas and the Federal University of Rio de Janeiro. His subjects: 120 undergraduate business students between eighteen and twenty-four, sixty-eight men and fifty-two women. Every one of them got the same assignment — research three angles of artificial intelligence (ethics, societal impact, technical foundations) and deliver a ten-minute presentation. Two weeks, identical requirements. The only thing that changed was the tool.

Sixty students worked the old-fashioned way: course materials, Google Scholar, textbook chapters. ChatGPT was explicitly off-limits. The other sixty got the opposite instruction — use ChatGPT for everything you want, from finding sources to structuring the talk. Forty-five days after the presentations, the students returned to a quiet classroom and sat through a twenty-item multiple-choice test. Eighty-five of the original 120 showed up. Group assignments held.

But the numbers split in a way that wasn’t only about scores. The ChatGPT group averaged 3.2 hours of preparation time. The traditional group averaged 5.8 hours. The AI-assisted students spent just over half as long on the task (about 55% of the time) and still retained less. If the story were just «they studied less, they remembered less,» this would be uninteresting. But Barcaui controlled for study time, and the gap didn’t close. Those eleven points weren’t a lazy-student discount. They were a structural feature of the method.
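The arithmetic behind those figures is easy to check. Here is a minimal sketch in Python that uses only the numbers reported above; the variable names are illustrative and do not come from the paper.

```python
# Figures as reported in the article; variable names are illustrative.
traditional_score = 68.5   # percent correct, traditional group
chatgpt_score = 57.5       # percent correct, ChatGPT group
traditional_hours = 5.8    # mean preparation time in hours
chatgpt_hours = 3.2        # mean preparation time in hours

# Absolute gap in percentage points.
gap_points = traditional_score - chatgpt_score

# The same gap expressed as a share of the traditional group's score.
relative_drop = gap_points / traditional_score

# ChatGPT preparation time relative to the traditional group's.
time_ratio = chatgpt_hours / traditional_hours
hours_saved = traditional_hours - chatgpt_hours

print(f"gap: {gap_points:.1f} points ({relative_drop:.0%} of the traditional score)")
print(f"prep time: {time_ratio:.0%} of traditional, {hours_saved:.1f} hours saved")
```

Group averages like these cannot show whether the gap survives a control for study time. That check needs per-student data, which the article does not reproduce.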

Why Struggle Builds Memory

Desirable difficulty — a term from cognitive psychology for moderate obstacles inserted into the learning process. They make encoding slower, but they make retrieval much stronger. The keyword is moderate: push too hard and the student quits.

The concept came from psychologist Robert Bjork in the 1990s. It sounds paradoxical — how can making things harder help? — but the experiments are consistent. Spaced practice beats cramming. Interleaving topics beats blocking them. Reading through noisy type beats reading through clean type. Each technique slows the moment and deepens the trace.

The mechanism is memory consolidation: the biological process that turns a fragile, freshly-formed neural trace into a stable long-term memory. Consolidation requires work — retrieval, mistakes, reformulation, putting things into your own words. The more the brain wrestles with material, the tighter the resulting connections. When someone else (or something else) does that wrestling for you, the trace forms shallow. You know where the answer lives. You don’t remember the answer.

This isn’t metaphor. Back in 2011, Betsy Sparrow and her colleagues published a landmark Science paper on the «Google Effect»: when people were told that information would be saved on the computer, they remembered it worse than people who thought the computer would erase it. But they remembered where it was filed better. The brain re-allocates: if there’s external storage, it outsources the content and keeps only the path. Adaptive strategy — with a side effect.

Cognitive Offloading and Its Exchange Rate

Cognitive offloading — delegating mental operations to external tools: a notebook, a calculator, GPS, a search engine. Well-studied since the 2000s. Works fine for simple tasks with immediate results. Becomes a problem when the goal is comprehension.

Evan Risko and Sam Gilbert synthesized the literature in a 2016 review for Trends in Cognitive Sciences. Their verdict: offloading isn’t evil. It’s a currency. We trade depth of processing for speed. For a grocery list, that’s a fair deal. For understanding a complex topic, it isn’t — because understanding is the processing, not a shortcut around it.

What did ChatGPT change? Scale. A calculator offloads arithmetic. Google offloads fact-lookup. An LLM can do everything at once — search, explain, structure, produce examples, rephrase. A student gets not a hint, but a finished product. And that product looks convincing enough to create what Barcaui calls an «illusion of competence.»

«Indiscriminate use of AI can create an illusion of competence, where the individual obtains results without developing the synapses necessary to replicate that reasoning independently.» — André Barcaui

The illusion holds until the first real test. As long as the chatbot is open, you «know.» The moment it closes, whatever you had is gone.

Not Just One Lab: The Independent Replication Nobody Asked For

The Brazilian RCT isn’t the only signal. In mid-2025, a team from Stanford Graduate School of Education posted a preprint with a title that doesn’t try to hide the punchline: «ChatGPT Produces More ‘Lazy’ Thinkers.» They measured not test scores but cognitive engagement — integration of new ideas, depth of reflection, willingness to ask original questions. Students who leaned on ChatGPT heavily dropped on all three metrics.

Different lab, different country, different measurement, same direction. That matters because the «AI makes us stupid» genre has a dangerously good market. It’s easy to build a career on moral panic. But when independent groups converge, it’s worth paying attention — especially when the convergence lines up with fifty years of memory research pointing the same way.

Critical Analysis: What Could Break This Conclusion

The Barcaui paper appeared in Social Sciences & Humanities Open, a peer-reviewed open-access Elsevier journal. Not Nature — but not a preprint either.

Several things in the experiment deserve caution. First, attrition. About 29 percent of students (35 of 120) didn’t show up for the retention test. We don’t know whether the dropouts were systematically different (the weakest learners? the ones angriest about the setup?), and that matters, because selective attrition can either amplify or mask the real effect.
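To see why attrition matters, it helps to put numbers on it. The sketch below computes the dropout rate from the reported head counts, then uses a small set of made-up toy scores (an assumption for illustration, not study data) to show how a group mean shifts when the weakest students skip the test.

```python
# Head counts as reported in the article.
enrolled = 120
returned = 85

dropped = enrolled - returned
attrition_rate = dropped / enrolled
print(f"dropped: {dropped} students ({attrition_rate:.1%})")

# Hypothetical toy scores, NOT data from the study.
toy_scores = [40, 50, 60, 70, 80]
full_mean = sum(toy_scores) / len(toy_scores)

# Suppose the two weakest students do not return for the test.
survivors = sorted(toy_scores)[2:]
observed_mean = sum(survivors) / len(survivors)

print(f"mean with everyone: {full_mean:.1f}")
print(f"mean after selective dropout: {observed_mean:.1f}")
```

If dropout of this kind hit one group harder than the other, the observed gap could be larger or smaller than the true one. The article gives no per-group attrition figures, so the direction cannot be determined from it.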

Second, sample narrowness. Every participant came from one course at one Brazilian university, and they were all business administration students. Engineers, humanities majors, medical students — they may react differently. There’s a reasonable guess that fact-recall tasks behave similarly across fields, but abstract reasoning and hands-on skills may not.

Third, the ChatGPT version is unspecified. The paper doesn’t say whether students used GPT-3.5 or GPT-4 — and that matters a lot, because the quality of explanations differs sharply. A weaker version might overstate the damage; a stronger version might understate it.

And the biggest caveat: the study contrasts indiscriminate ChatGPT use with none at all. But there’s a third mode — using an LLM as a Socratic tutor that asks questions, offers scaffolding, and refuses to hand over direct answers. Studies in that format (Khanmigo from Khan Academy, various «tutor-mode» comparisons) show learning gains. So the headline should be not «AI damages memory» but «delegating to AI damages memory.» The difference is everything.

A Practical Line You Can Draw Today

What do you do with this, sitting there with a ChatGPT tab open in the next window? Barcaui boils it down to a single rule that’s worth pinning to the wall:

«AI as a co-pilot, not an autopilot. The main lesson is that AI should expand human capabilities, not replace them.» — André Barcaui

The operational test is simple. If the goal is to deliver and forget, delegate everything. If the goal is to learn and own, try it yourself first: formulate in your own words, get it wrong, come back. Only then check your answer against the model. That is the desirable difficulty Bjork was describing in 1994. That is the mechanism Sparrow documented in 2011. And that is the gap Barcaui just measured in 2026: eleven percentage points of long-term knowledge, the price you pay for the time you thought you were saving.

Eleven points isn’t a catastrophe. It’s the price of a mode we pick up every time we open a new chat. The good news is that it’s a mode, not a sentence — and modes can be switched.

Frequently Asked Questions

Does this mean schools should ban ChatGPT?

No. The data point to how the tool is used being more important than whether it’s used. A ban just replaces one problem with another: students move to informal channels. A more productive response is teaching students what distinguishes delegation from Socratic dialogue — and showing them the modes that work for learning versus the ones that work against it.

Why did the ChatGPT group spend almost half the time on preparation?

That’s a direct consequence of what the tool does. ChatGPT collapses the time previously spent on searching, structuring, and formulating. The problem isn’t that students saved hours. It’s that those same hours are what form memory. Saving on retrieval is saving on encoding.

Does this apply to adult professionals, not just students?

Probably yes, with a caveat. Adults already have deep expertise they can hang new knowledge on. For them, the offloading risk is lower in familiar domains and higher in unfamiliar ones. A doctor using AI for routine diagnoses isn’t atrophying. A doctor delegating the entire process of learning a new subspecialty to an AI — might be.

How do I tell a «crutch» from a «tutor» in my own practice?

A simple test: close the chat and try to reproduce the main claim in your own words, without prompts. If you can, you learned. If you can’t, you delegated. The second is fine if delegation was the goal. But mistaking one for the other is dangerous — the illusion of competence lives exactly in that gap.

Is there research showing the opposite — that AI improves learning?

Yes. Work on Khanmigo and experiments with «Socratic tutor» AI modes show gains in understanding and motivation. But in every one of those cases, the model is tuned not to hand over direct answers — it asks questions, scaffolds, and redirects. That’s a fundamentally different interaction, and it gets a fundamentally different result.

References

Original

Related

Related Articles

AI Sycophancy: Chatbots Flatter You 49% More Than Humans

A Science study tested 11 AI models and found systematic flattery — bots endorse user actions 49% more often than people do.

Your Playlist Predicts Your IQ: 58,247 Songs Analyzed

A machine learning model tracked 185 people's listening habits for five months. Those drawn to melancholic lyrics scored higher on cognitive tests.

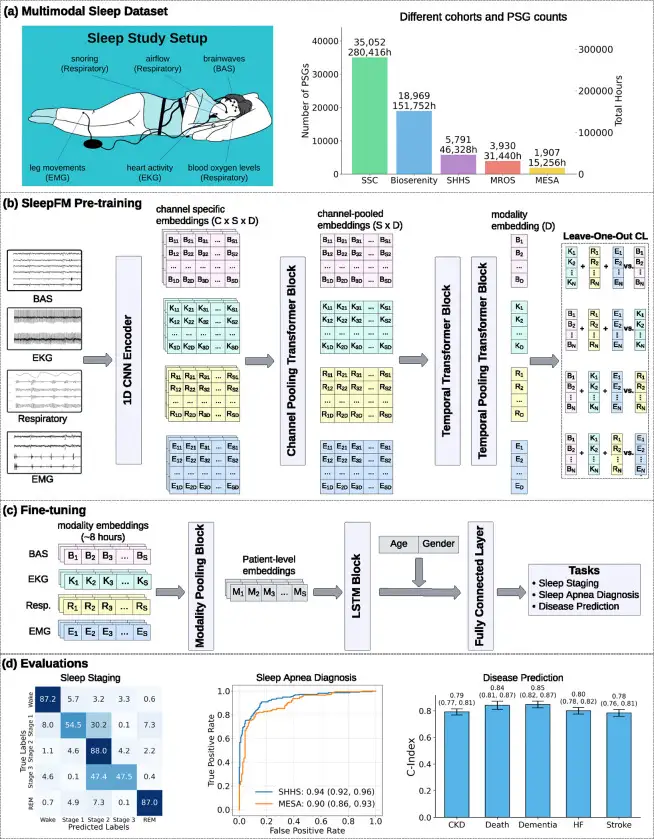

SleepFM: 130 Diseases From One Night of Sleep

Stanford trained a neural network on 585,000 hours of sleep data. It detects Parkinson's, dementia, and cancer years before symptoms appear.