AI Conquered Mathematics in Two Years

Authors: Alex Wilkins

Here is a timeline that should not exist. In early 2024, large language models routinely failed at high school algebra — mixing up signs, inventing rules, producing confident nonsense. By mid-2025, an AI system won gold at the International Mathematical Olympiad, solving problems that stumped all but a handful of the world’s brightest teenage prodigies.

«A couple of years ago, they were basically useless for even solving high school math problems, and now they can sometimes solve problems that really appear in the research life of a mathematician,» says Daniel Litt, a mathematician at the University of Toronto. In March 2025 he wagered that AI had only a 25% chance of writing a research-grade math paper by 2030. One year on, he conceded publicly: «I now expect to lose this bet.»

The Last Fortress Falls

Chess surrendered to machines in 1997. Go held out until 2016. Protein folding capitulated in 2020. Each time, experts said: «Yes, but mathematics is different.» Proving a theorem requires invention, not just calculation. You need to see a structure no one has described, choose a strategy from infinite options, and sense — without proof — that a proof exists. That kind of creativity, the argument went, was uniquely human. The argument was wrong.

Silver, Then Gold: A Two-Year Timeline

In July 2024, Google DeepMind unveiled AlphaProof — a system trained on 80 million formal mathematical statements in the Lean proof language.

Lean is a formal verification language where every step of a proof is machine-checked. If a proof compiles, it is guaranteed correct.

AlphaProof solved three of the six problems at the 2024 International Mathematical Olympiad, including Problem 6, the hardest of the competition, which only five of roughly 600 human contestants solved. AlphaGeometry 2, a geometry specialist, solved a fourth, putting the combined system at silver-medal level. The result was published in Nature.

The geometry module proved even more striking in retrospective testing. AlphaGeometry 2 solved 84% of all IMO geometry problems from 2000 to 2024 — outperforming the average gold medalist in that subdomain.

In July 2025, OpenAI fielded a system that earned gold at IMO 2025. Then in early 2026, NVIDIA released Nemotron-Cascade 2, a 30-billion-parameter model built on a «mixture of experts» architecture, meaning that only a small specialist fraction of the network (here just 3 billion parameters) activates for any given problem. The model took gold at three separate competitions: the IMO, the IOI (informatics), and the ICPC World Finals (team programming). It achieved this with 20 times fewer parameters than DeepSeek.
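To make the «mixture of experts» idea concrete, here is a minimal Python sketch of top-k routing. Everything in it is an assumption chosen for illustration: the ten toy experts, the hand-written gate scores, and the function names `top_k_route` and `moe_forward` are hypothetical and are not taken from Nemotron-Cascade 2. Real models learn their gate scores and route separately for every token and layer.

```python
import math

def top_k_route(gate_scores, k):
    """Pick the k highest-scoring experts and softmax-normalize their scores."""
    chosen = sorted(range(len(gate_scores)),
                    key=lambda i: gate_scores[i], reverse=True)[:k]
    exps = [math.exp(gate_scores[i]) for i in chosen]
    total = sum(exps)
    return chosen, [e / total for e in exps]

def moe_forward(x, experts, gate_scores, k=1):
    """Evaluate only the chosen experts and blend their outputs."""
    chosen, weights = top_k_route(gate_scores, k)
    output = sum(w * experts[i](x) for i, w in zip(chosen, weights))
    return output, chosen

# Ten hypothetical experts; each one simply scales its input differently.
experts = [lambda x, s=s: s * x for s in range(1, 11)]
# Hand-written gate scores (an assumption; a real gate is a trained network).
gate_scores = [0.1, 2.0, -1.0, 0.3, 0.0, 1.5, -0.5, 0.2, 0.4, -2.0]

output, active = moe_forward(3.0, experts, gate_scores, k=1)
active_fraction = len(active) / len(experts)  # 1 of 10 experts did any work
```

With one of ten equally sized experts active, a tenth of the expert capacity runs per input, the same ratio as 3 billion active parameters out of 30 billion. The other nine experts cost memory but no computation.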

Two years. From failing algebra to triple gold at world championships.

How a Machine Proves Theorems

AlphaProof operates on a principle that is simultaneously simple and counterintuitive. The system does not «understand» mathematics in any human sense. It plays a game.

Reinforcement learning is a method where an agent learns through trial and error, receiving rewards for correct steps. AlphaGo learned Go this way. AlphaProof learned to prove theorems.

Each proof is framed as a sequence of moves in the Lean language. Each move applies a lemma, substitutes a variable, or invokes induction. Lean verifies every step instantly: either it is logically sound, or it is not. The system’s task is to find a sequence of moves leading from axioms to the target statement.
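For a sense of what such moves look like, here is a small Lean 4 proof, written for this article rather than taken from AlphaProof. It shows that adding zero on the left leaves a natural number unchanged. The statement is deliberately elementary; `Nat.add_succ` is a lemma from Lean's core library, and the variable names `k` and `ih` are arbitrary.

```lean
-- Claim: 0 + n = n for every natural number n.
example (n : Nat) : 0 + n = n := by
  induction n with                        -- move 1: invoke induction on n
  | zero => rfl                           -- base case: 0 + 0 = 0 holds by computation
  | succ k ih => rw [Nat.add_succ, ih]    -- moves 2 and 3: apply a lemma, then the hypothesis
```

If any of these lines were replaced by a step that does not follow, Lean would reject the file instead of accepting a flawed proof.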

This creates a closed loop: AI proposes a proof, Lean verifies it, and the outcome feeds back into training. No human reviewer is needed and no curated expert dataset: the system teaches itself by playing millions of «proof games,» each one refining its intuition about which moves lead somewhere productive.
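The propose, verify, reinforce cycle can be sketched in a few lines of Python. This is a toy under loud assumptions, not AlphaProof's method. The «theorem» is reaching a target number from a starting number. The two moves, the weight table standing in for a neural network, and the names `verify`, `propose` and `train` are all hypothetical. The function `verify` plays the role of Lean: it replays every move and accepts only an exact result.

```python
import random

# The legal moves of the toy game (an assumption; real moves are Lean tactics).
MOVES = {"add_one": lambda n: n + 1, "double": lambda n: n * 2}

def verify(moves, start, target):
    """Stand-in for the proof checker: replay each move, accept only an exact hit."""
    value = start
    for name in moves:
        if name not in MOVES:
            return False
        value = MOVES[name](value)
    return value == target

def propose(policy, start, target, max_len, rng):
    """Sample a move sequence, favoring moves that were rewarded before."""
    value, moves = start, []
    while value < target and len(moves) < max_len:
        names = list(MOVES)
        weights = [policy.get((value, name), 1.0) for name in names]
        name = rng.choices(names, weights=weights)[0]
        moves.append(name)
        value = MOVES[name](value)
    return moves

def train(start=1, target=20, max_len=8, episodes=2000, seed=0):
    rng = random.Random(seed)
    policy = {}  # (current value, move) -> preference weight
    best = None
    for _ in range(episodes):
        moves = propose(policy, start, target, max_len, rng)
        if verify(moves, start, target):  # only verified attempts earn reward
            value = start
            for name in moves:
                key = (value, name)
                policy[key] = policy.get(key, 1.0) + 1.0
                value = MOVES[name](value)
            if best is None or len(moves) < len(best):
                best = moves
    return policy, best

policy, best = train()
```

No example solutions are supplied anywhere. The only training signal is the checker's yes or no, which is the property the closed loop relies on.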

AlphaEvolve took a different path. Developed by DeepMind in collaboration with Fields Medalist Terence Tao, it uses an evolutionary approach: generate mathematical constructions, evaluate them, mutate the best, repeat. Across 67 problems in analysis, combinatorics, and number theory, the system reproduced the best known solutions 75% of the time — and improved on some.
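The generate, evaluate, mutate, repeat cycle can also be illustrated with a toy search in Python. The problem is picked here for illustration and is not claimed to be one of the 67: find a large set of numbers below 30 in which no three are evenly spaced. The random mutation, the population size and the names `score`, `mutate` and `evolve` are assumptions. The article does not describe how AlphaEvolve produces its variations, so random edits stand in for that step.

```python
import random

def has_three_term_ap(s):
    """True if the set contains a < b < c with b - a == c - b."""
    items = sorted(s)
    members = set(items)
    for i, a in enumerate(items):
        for b in items[i + 1:]:
            if 2 * b - a in members:
                return True
    return False

def score(s):
    """Evaluator: larger progression-free sets score higher; invalid sets get -1."""
    return -1 if has_three_term_ap(s) else len(s)

def mutate(s, n, rng):
    """Sometimes drop one element, then add a random element below n."""
    child = set(s)
    if child and rng.random() < 0.3:
        child.remove(rng.choice(sorted(child)))
    child.add(rng.randrange(n))
    return frozenset(child)

def evolve(n=30, population_size=20, generations=200, seed=0):
    rng = random.Random(seed)
    population = [frozenset()] * population_size
    for _ in range(generations):
        children = [mutate(p, n, rng) for p in population]
        # Keep the highest-scoring candidates among parents and children.
        pool = sorted(set(population) | set(children), key=score, reverse=True)
        population = pool[:population_size]
    return max(population, key=score)

best = evolve()
```

Because parents compete alongside their children, the best score never decreases from one generation to the next. The evaluator, like Lean in the proof setting, is what keeps the search honest: a set that contains an evenly spaced triple is never rewarded, however large it is.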

Collaborator, Not Successor

The panic headlines — «AI Will Replace Mathematicians» — miss the mark. But so does pretending nothing has changed.

Terence Tao predicted in 2023 that 2026-level AI would become «a trustworthy co-author in mathematical research.» His forecast is materializing. Working with AlphaEvolve, he found that the system generates useful constructions that a mathematician then directs and interprets. The machine proposes; the human disposes.

Kevin Buzzard, a professor at Imperial College London and an evangelist for formal verification, sees the combination of AI and Lean as a new division of labor. The machine handles formalization — the tedious translation of an idea into rigorous proof. The human focuses on what algorithms still cannot do: choosing a direction, formulating a conjecture, sensing which problem is interesting.

The most unexpected effect is democratization. Amateurs without mathematics degrees have started solving problems that professionals could not close for decades. AI bridges the gap in technical training: it generates formal steps while the human sets the direction. Think of it as a calculator for arithmetic — except at the level of olympiad theorems.

The Gaps in the Gold Medal

Four out of six. That was the combined IMO 2024 score of AlphaProof and AlphaGeometry 2. Impressive, but two problems survived. Both were combinatorial: they required inventing a construction from scratch rather than navigating toward a known target. Every major AI math system shares this blind spot.

Why? Reinforcement learning thrives on clear feedback. Lean delivers exactly that for proofs: either the code compiles or it does not. But formulating a new conjecture, deciding which problem matters, sensing that a particular direction will be fruitful: none of these comes with an objective loss function.

The key results have appeared in Nature or on arXiv with Lean verification, though reproducing them depends on access to proprietary compute at DeepMind, OpenAI, and NVIDIA.

There is a subtler gap, too. Every IMO problem comes with a guarantee: a solution exists. Real mathematical research offers no such comfort. Researchers spend months — sometimes years — discovering that their question was malformed. Moving from olympiad puzzles to open problems is not an incremental step but a qualitative leap, and no system has made it yet.

A New Arithmetic of Proof

Litt’s bet is a small story about a large transformation. Two years ago, a mathematician could confidently say: «My field is safe.» Today, the accurate statement is: «My field is changing.»

Not replacement. Augmentation. Every time mathematics gained a powerful new tool (numerical methods in the 1940s, computer algebra in the 1960s), the discipline did not shrink. It expanded. Problems that could not even be stated before became tractable.

Litt expects to lose his wager. But for mathematics as a whole, his loss may be a win.

FAQ

Can AI fully replace mathematicians?

No. Current systems excel at proving formulated statements but cannot pose problems, choose research directions, or judge the elegance of a theory. AI is a powerful tool, not an autonomous researcher. The closest analogy is the calculator: it did not replace mathematicians but changed what they spend their time on.

What is AlphaProof and how does it differ from ChatGPT?

AlphaProof is a specialized Google DeepMind system for formal mathematical proofs. Unlike ChatGPT, which generates text and can hallucinate, AlphaProof works in the Lean language where every proof step is machine-verified. If a proof compiles, it is guaranteed correct. ChatGPT can produce convincing but false mathematics; AlphaProof cannot.

Why was mathematics considered safe from AI?

Mathematical proof requires creative intuition — seeing a path to a solution, choosing a strategy, inventing a construction. This differs from pattern recognition in data, where AI succeeded earlier. The assumption was that without «understanding» the meaning of formulas, a machine could not prove theorems. AlphaProof demonstrated that reinforcement learning within a formal environment can bypass this barrier.

What kinds of math problems can AI still not solve?

Combinatorial problems requiring invention of constructions from scratch remain difficult. AI also cannot formulate new conjectures or determine which problems are worth pursuing. Millennium Prize Problems (Riemann Hypothesis, P vs NP) are still out of reach.

References

Related

Related Articles

Humanity's Last Exam: The Test AI Keeps Failing

2,500 questions no AI can Google. GPT-4o scored 2.7%, humans hit 90%. Inside the hardest AI benchmark and its 30% error rate.

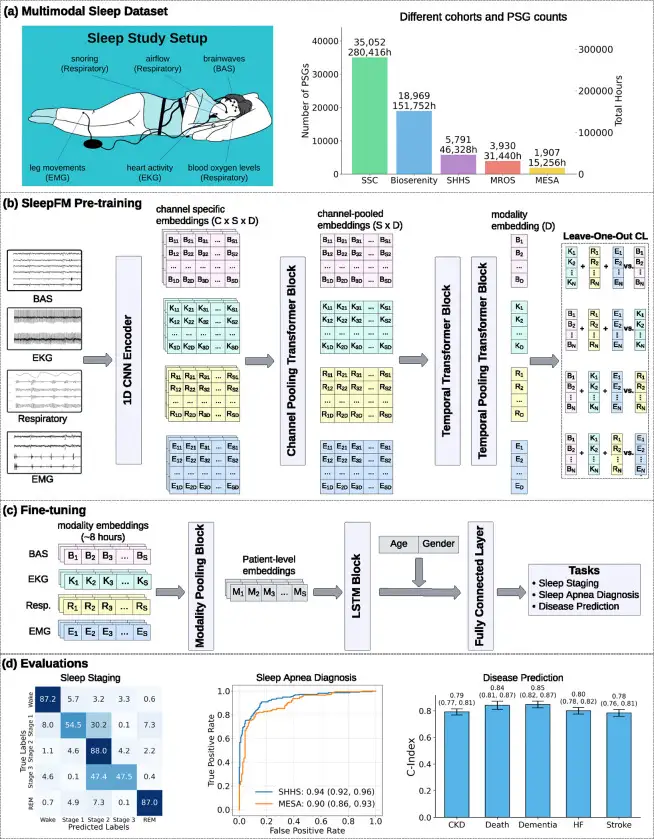

SleepFM: 130 Diseases From One Night of Sleep

Stanford trained a neural network on 585,000 hours of sleep data. It detects Parkinson's, dementia, and cancer years before symptoms appear.

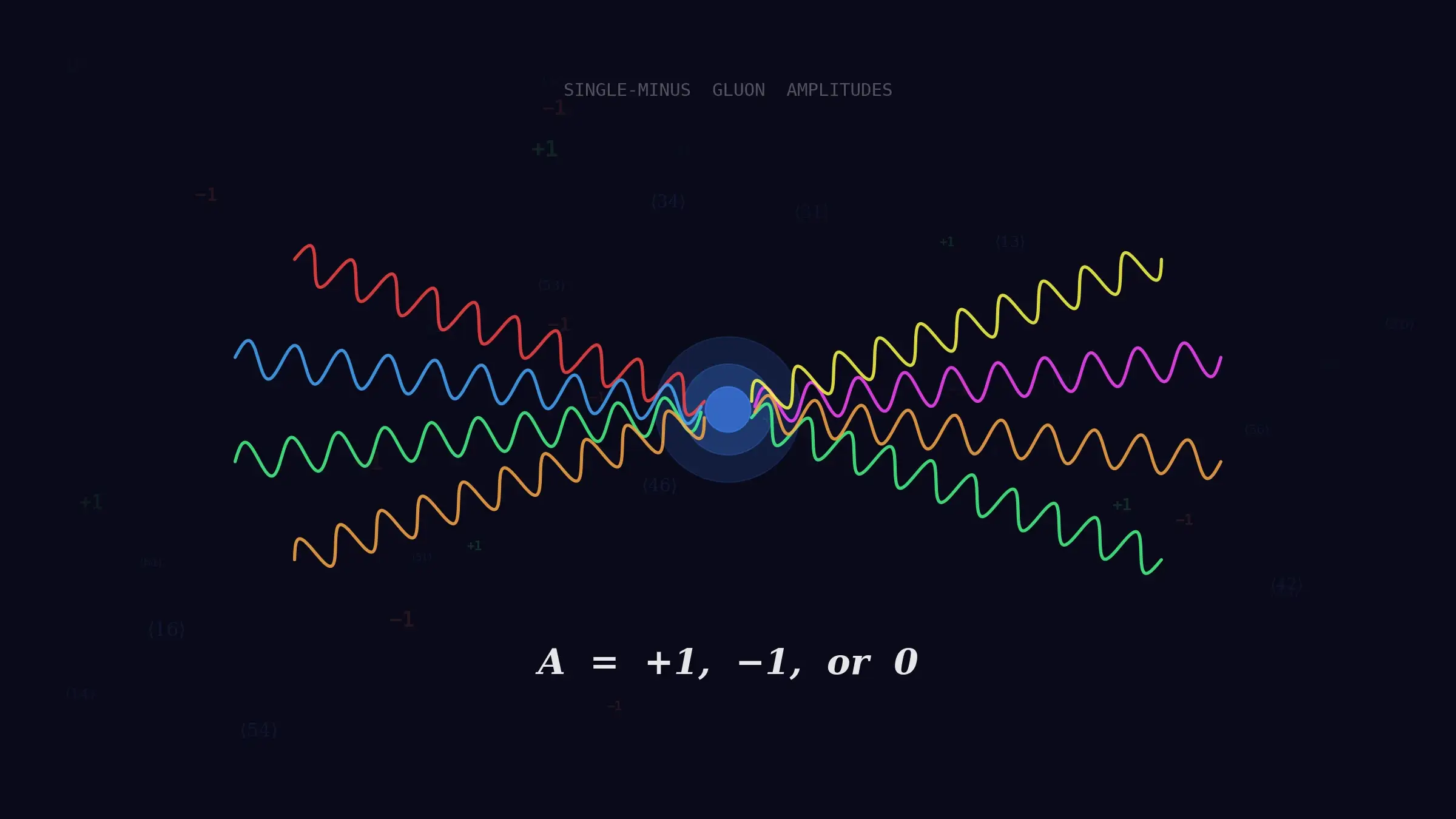

GPT-5.2 Cracked a 40-Year Gluon Mystery

OpenAI's model conjectured a formula for gluon amplitudes assumed zero since 1986. Physicists from Harvard and Cambridge confirmed it was right.